Kubernetes has emerged as one of the fundamental pillars of the modern IT ecosystem, thanks to its ability to orchestrate containers efficiently and at scale. Originally developed by Google and then released as an open source project in 2014, Kubernetes has transformed the way companies manage their applications, as we explored in depth in our Complete Guide to Kubernetes.

At the heart of Kubernetes lies an architecture designed to manage containers at scale. A container is a lightweight, portable unit that encapsulates an application and all its dependencies, making it consistently executable across different environments. However, when you move from managing a few containers to hundreds or thousands, placing, connecting, updating, and monitoring them by hand quickly becomes unmanageable. This is where Kubernetes comes into play: it automates the distribution, monitoring, and management of containers, and it helps keep resource usage efficient and applications available and performant.

Kubernetes architecture: the main components

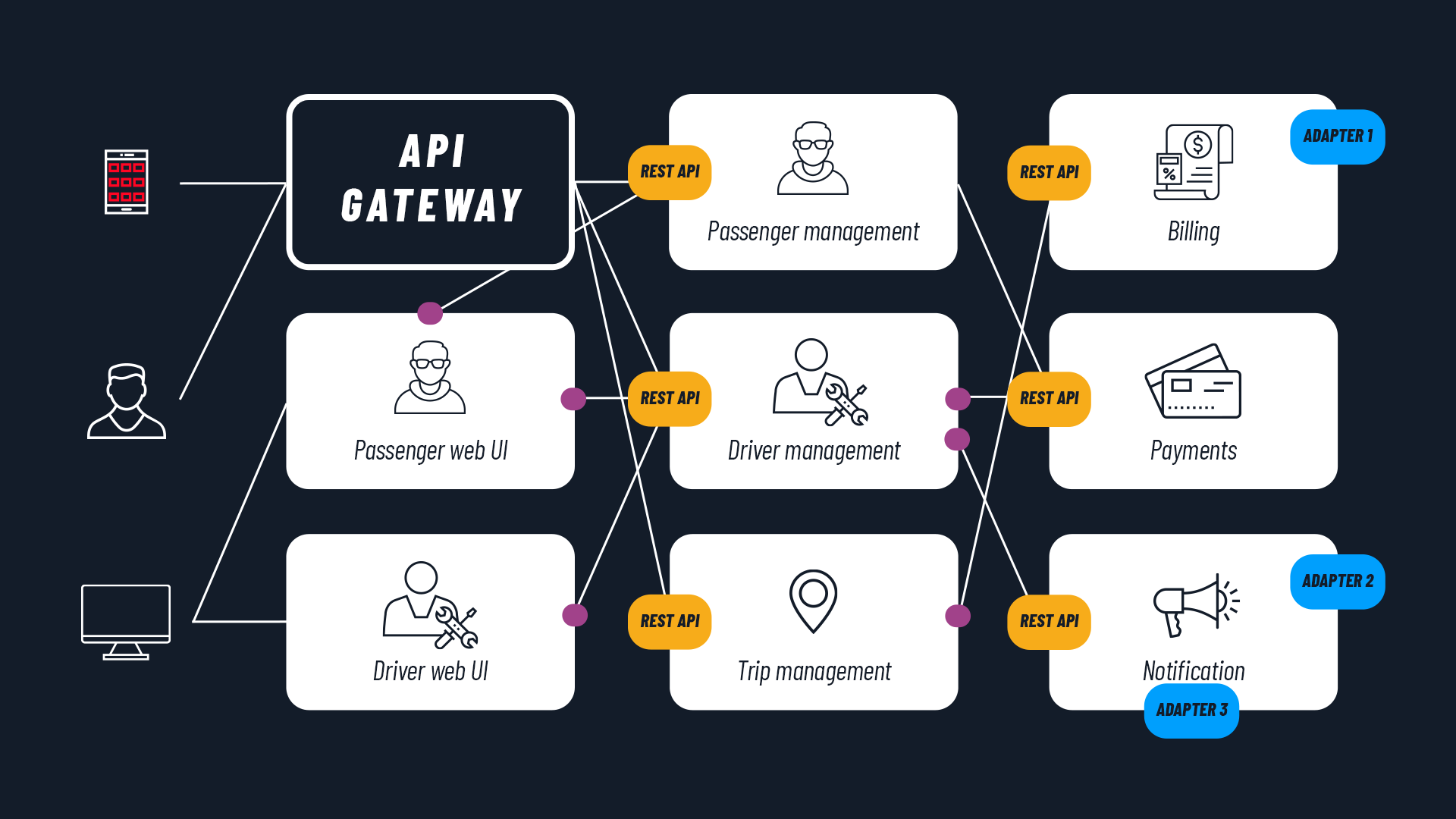

Kubernetes is based on a modular and well-defined architecture, composed of a series of components that work in synergy to orchestrate containers effectively. These components perform different but complementary roles, and it is precisely through their interaction that Kubernetes is able to guarantee automation, scalability, and resilience for modern applications. In this article, we will explore them in detail, starting with a quick overview of the Control Plane and Worker Nodes, before zooming in to analyze the individual elements in depth.

Control Plane

The Control Plane represents the beating heart of Kubernetes, responsible for the management and control decisions of the entire cluster. Its inner workings long remained mysterious, so much so that it was perceived as almost “magical” in its early days, when Kubernetes first began spreading beyond Google as an open source technology. Over time, Google and the Kubernetes community worked to improve documentation to clarify how the Control Plane works and its distributed nature.

But how exactly does the Control Plane work? Its primary function is to maintain the “desired state” of the system, that is, to ensure that the infrastructure and distributed applications run exactly as specified by users or administrators. This task is made possible by the interaction of several key components that, working together, monitor and regulate the entire Kubernetes ecosystem. These components — which we will analyze in detail later — are:

- Kube-API server: the main access point for all internal and external communications with the cluster.

- Etcd: a distributed, highly reliable database that stores the entire state of the cluster.

- Kube-scheduler: assigns each newly created Pod to a suitable node, taking into account the resources the Pod requests and any placement constraints.

- Kube-controller-manager: runs the controllers that continuously compare the actual state of the cluster with the desired state and act to close the gap.

While the Control Plane handles management decisions and monitors the state of the cluster, there are other elements that play a more operational role: we are talking about the Worker Nodes.

Worker Nodes

Worker Nodes are the actual executors of workloads within a Kubernetes cluster. If the Control Plane handles management decisions and monitors the state of the cluster, Worker Nodes are responsible for the actual execution of the containers that make up the applications. To ensure that all of this happens efficiently and in a coordinated manner, each node contains a series of fundamental components that enable container execution and communication with the Control Plane. These main components are:

- Kubelet: an agent that constantly communicates with the Control Plane through the Kube-API server, receiving the desired state of the Pods assigned to its node (which the API server persists in etcd) and monitoring the containers running on the node.

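- Kube-Proxy: responsible for Service networking on the node. It maintains the network rules that forward traffic addressed to a Service to one of the Pods behind it, wherever in the cluster that Pod runs. Communication between Pods distributed across different nodes is one of the most complex and crucial aspects of Kubernetes, and the underlying Pod-to-Pod connectivity is provided by the cluster's network plugin (the “Pod” is the smallest application element that can be created and managed in Kubernetes, composed of one or more containers).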

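- Container runtime: the element that actually runs the containers within each node. Kubernetes works with any runtime that implements the Container Runtime Interface (CRI), such as containerd or CRI-O. Docker Engine can still be used, but since the removal of dockershim in Kubernetes 1.24 it requires the external cri-dockerd adapter. The choice of runtime can vary depending on infrastructure needs or user preferences.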

The interaction between Worker Nodes and the Control Plane is essential for the proper functioning of the cluster. The Control Plane establishes what the “desired state” of the cluster should be, such as the number of running Pods and their distribution, while the Worker Nodes, through the Kubelet, execute those instructions. The Kubelet constantly sends updates to the Control Plane, informing it of the current state of containers and nodes. This bidirectional communication allows Kubernetes to dynamically respond to changes: if a node becomes unreachable or a container stops working, the Control Plane can promptly intervene to restore the desired state.

Control Plane details

We have seen how the Control Plane and Worker Nodes work in a complementary way to ensure the proper functioning of a Kubernetes cluster. Now let’s focus on the elements that make up the Control Plane, to fully understand how it works.

Kube-API server

The Kube-API server is the central point through which all interactions with Kubernetes are orchestrated. It functions as an intermediary between users, internal components, and cluster resources, ensuring that every request is handled consistently and securely. This component is effectively the “gatekeeper” of the cluster, and every command, request, or modification passes through it before being processed. The Kube-API server implements a RESTful interface, which means that all operations within Kubernetes are exposed and accessible via a REST API. This API is the heart of the system, enabling programmatic and flexible management of the cluster.
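To make the REST structure concrete, here is a minimal Python sketch of how resource URLs are laid out. The helper `resource_path` is a hypothetical name introduced for illustration; the path layout it produces (`/api/v1` for the core group, `/apis/<group>/<version>` for named groups) follows the conventions of the Kubernetes API.

```python
from typing import Optional


def resource_path(resource: str, version: str = "v1", group: str = "",
                  namespace: Optional[str] = None,
                  name: Optional[str] = None) -> str:
    """Build the REST path of a Kubernetes resource (illustrative helper).

    The core group (empty string) is served under /api, every other
    API group under /apis/<group>.
    """
    prefix = f"/api/{version}" if group == "" else f"/apis/{group}/{version}"
    parts = [prefix]
    if namespace is not None:
        # Namespaced resources are nested under their namespace.
        parts.append(f"namespaces/{namespace}")
    parts.append(resource)
    if name is not None:
        parts.append(name)
    return "/".join(parts)


print(resource_path("pods", namespace="default", name="web-0"))
# /api/v1/namespaces/default/pods/web-0
print(resource_path("deployments", group="apps", namespace="default"))
# /apis/apps/v1/namespaces/default/deployments
print(resource_path("nodes"))
# /api/v1/nodes
```

Standard HTTP verbs then map onto these paths: a `GET` reads or lists objects, a `POST` on the collection creates one, and a `DELETE` on a named object removes it.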

Etcd

Etcd is one of the most critical components of Kubernetes architecture, serving as a distributed store that holds all vital information about the state and configuration of the cluster. It is a lightweight, highly available key-value database, designed to ensure that every change in the cluster state is tracked and that the most up-to-date information is always accessible to the Kubernetes components that need it. Its reliability and consistency are fundamental to the proper functioning of Kubernetes, as the cluster relies on etcd to maintain an accurate record of resource states.
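The idea of a key-value store in which every change is tracked can be sketched with a toy in-memory model. This is not etcd and does not use its client API: `ToyStore` and its methods are hypothetical names, and the `/registry/...` key layout is shown only as an illustrative assumption about how objects might be keyed.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class ToyStore:
    """In-memory key-value store with a global revision counter (not real etcd)."""
    revision: int = 0
    # key -> (value, revision at which the key was last modified)
    data: Dict[str, Tuple[str, int]] = field(default_factory=dict)
    # append-only history of (revision, key, value)
    log: List[Tuple[int, str, str]] = field(default_factory=list)

    def put(self, key: str, value: str) -> int:
        """Write a value and return the new global revision."""
        self.revision += 1
        self.data[key] = (value, self.revision)
        self.log.append((self.revision, key, value))
        return self.revision

    def get_prefix(self, prefix: str) -> Dict[str, str]:
        """Return the latest value of every key starting with prefix."""
        return {k: v for k, (v, _) in sorted(self.data.items())
                if k.startswith(prefix)}

    def changes_since(self, revision: int, prefix: str = "") -> List[Tuple[int, str, str]]:
        """Return every change made after the given revision (a simple 'watch')."""
        return [e for e in self.log if e[0] > revision and e[1].startswith(prefix)]


store = ToyStore()
store.put("/registry/pods/default/web-0", "Pending")
seen = store.put("/registry/pods/default/web-1", "Pending")
store.put("/registry/pods/default/web-0", "Running")

print(store.get_prefix("/registry/pods/"))
print(store.changes_since(seen))
# [(3, '/registry/pods/default/web-0', 'Running')]
```

The revision counter is what lets a client ask "what changed since I last looked?", which is the pattern Kubernetes components rely on to stay up to date.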

Kube-scheduler

The Kube-scheduler is the Kubernetes component responsible for deciding where Pods should run within the cluster. Every time a new Pod is created, the Kube-scheduler springs into action to find the most suitable node in which to place it. This process of assigning Pods to nodes, known as “scheduling,” is one of Kubernetes’ core functions, as it ensures that applications are distributed efficiently and that cluster resources are used optimally.

The Kube-scheduler’s task is not simply to find an available node, but to ensure that the chosen node is capable of meeting the Pod’s requirements, taking into account a series of criteria and constraints that can influence the selection. The scheduling process begins when a new Pod is created and has not yet been assigned to any node. The Kube-scheduler analyzes the current state of the cluster and considers all available nodes, applying a series of selection criteria to identify suitable ones.

The main criteria include the availability of resources such as CPU and memory, affinity or anti-affinity constraints between Pods, and specific Pod requirements, such as the need for a particular type of storage or a node that has access to specific peripherals or GPUs. Another important factor that the Kube-scheduler considers is taints and tolerations, a mechanism that allows defining nodes that can or cannot host certain types of workloads, thus ensuring proper resource segregation.
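The selection process described above can be sketched as two phases: first filter out the nodes that cannot host the Pod, then score the remaining ones. The following is a simplified model, not the real scheduler: the `Node` and `Pod` classes, the treatment of taints as plain strings, and the scoring rule (prefer the node with the most free resources left after placement) are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class Node:
    name: str
    free_cpu_m: int                      # free CPU in millicores
    free_mem_mi: int                     # free memory in MiB
    taints: Set[str] = field(default_factory=set)


@dataclass
class Pod:
    name: str
    cpu_m: int                           # requested CPU in millicores
    mem_mi: int                          # requested memory in MiB
    tolerations: Set[str] = field(default_factory=set)


def feasible(pod: Pod, node: Node) -> bool:
    """Filter phase: resources must fit and every taint must be tolerated."""
    fits = pod.cpu_m <= node.free_cpu_m and pod.mem_mi <= node.free_mem_mi
    tolerated = node.taints <= pod.tolerations
    return fits and tolerated


def _fraction_left(free: int, request: int) -> float:
    return (free - request) / free if free > 0 else 0.0


def score(pod: Pod, node: Node) -> float:
    """Score phase: higher means more headroom left after placing the Pod."""
    return (_fraction_left(node.free_cpu_m, pod.cpu_m)
            + _fraction_left(node.free_mem_mi, pod.mem_mi)) / 2


def schedule(pod: Pod, nodes: List[Node]) -> Optional[str]:
    """Return the name of the best feasible node, or None if none fits."""
    candidates = [n for n in nodes if feasible(pod, n)]
    if not candidates:
        return None
    return max(candidates, key=lambda n: score(pod, n)).name


nodes = [
    Node("node-a", free_cpu_m=500, free_mem_mi=1024),
    Node("node-b", free_cpu_m=2000, free_mem_mi=4096),
    Node("gpu-1", free_cpu_m=4000, free_mem_mi=8192, taints={"gpu"}),
]
print(schedule(Pod("web", 250, 512), nodes))                        # node-b
print(schedule(Pod("train", 3000, 4096, tolerations={"gpu"}), nodes))  # gpu-1
print(schedule(Pod("huge", 8000, 512), nodes))                      # None
```

The `web` Pod never lands on `gpu-1` even though that node has the most free resources, because it does not tolerate the node's taint. A Pod for which no node passes the filter stays unscheduled.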

Kube-controller-manager

The Kube-controller-manager is an essential component of Kubernetes architecture, responsible for managing controllers, which are the true “guardians” of the cluster’s desired state. Each controller is designed to monitor and maintain a particular type of resource, ensuring that the number and state of resources in the cluster are always in line with user-defined specifications. This process is crucial for ensuring that applications are always available and functioning as expected, thus contributing to the resilience and reliability of the system.

Among the main controllers run by the Kube-controller-manager are the ReplicaSet controller (the successor to the older Replication Controller), which ensures that a specified number of replicas of a Pod are always running and automatically launches new ones in case of failure, and the Deployment controller, which manages application update and rollback strategies, allowing Pod versions to be rolled out gradually and, when configured appropriately, without downtime. Another example is the StatefulSet controller, designed for stateful applications such as databases, which gives each Pod a stable, unique identity and lets it keep its data across restarts through PersistentVolumes.
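What a controller does can be summarized as a reconciliation step: compare the desired state with the observed state and compute the actions that close the gap. Below is a minimal sketch of that step for a replica count. The function `reconcile` and the sequential Pod naming scheme are hypothetical, chosen only to keep the example deterministic.

```python
from typing import Dict, List


def reconcile(desired_replicas: int, running: List[str], name: str) -> Dict[str, List[str]]:
    """Return the actions that move the observed state toward the desired one."""
    diff = desired_replicas - len(running)
    if diff > 0:
        # Too few Pods: create the missing ones, reusing free names.
        existing = set(running)
        to_create: List[str] = []
        i = 0
        while len(to_create) < diff:
            candidate = f"{name}-{i}"
            if candidate not in existing:
                to_create.append(candidate)
            i += 1
        return {"create": to_create, "delete": []}
    if diff < 0:
        # Too many Pods: delete the surplus (here, the last ones by name).
        return {"create": [], "delete": sorted(running)[diff:]}
    # Observed state already matches the desired state: nothing to do.
    return {"create": [], "delete": []}


print(reconcile(3, ["web-0", "web-2"], "web"))
# {'create': ['web-1'], 'delete': []}
print(reconcile(1, ["web-0", "web-1", "web-2"], "web"))
# {'create': [], 'delete': ['web-1', 'web-2']}
```

A real controller runs this kind of comparison continuously, triggered by changes it observes through the API server, so a Pod that disappears is replaced without anyone asking for it.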

How Worker Nodes work

As we saw in the first part of the article, Worker Nodes are responsible for the actual execution of workloads within the cluster. These nodes play a crucial role in managing containerized applications, hosting Pods and ensuring they run efficiently and securely.

In this section, we will explore more deeply how Worker Nodes interact with the Control Plane and which key components contribute to their operation, thus ensuring the resilience and reliability of the cluster. Let’s look in detail at how Worker Nodes work, exploring the role of Pods, Kubelet, and Kube-proxy.

Pods

Pods represent the basic unit of execution in Kubernetes, serving as wrappers for applications and their related services. Each Pod can host one or more containers, which share resources and can communicate with each other within an isolated environment. This architecture allows grouping together application components that need to work in conjunction, facilitating the management and scalability of containerized applications.

When an application is deployed on a Kubernetes cluster, Pods are created in response to user-defined specifications, for example, in Deployments or StatefulSets. Each Pod has its own IP address and a unique name, allowing easy identification and access. Within a Pod, containers can share the same storage, enabling them to access data simultaneously, and they can also share the network, facilitating communication between the various components.
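As an illustration of containers sharing storage inside a Pod, here is a Pod manifest expressed as a Python dictionary, with two containers mounting the same `emptyDir` volume. The Pod name, container names, images, and paths are illustrative assumptions; the field names (`apiVersion`, `kind`, `spec.containers`, `volumeMounts`, and so on) are those of the Kubernetes Pod API. The helper `shared_volumes` is hypothetical.

```python
import json
from collections import Counter
from typing import Any, Dict, List

pod: Dict[str, Any] = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "web-with-sidecar", "labels": {"app": "web"}},
    "spec": {
        # A scratch volume that lives as long as the Pod does.
        "volumes": [{"name": "shared-logs", "emptyDir": {}}],
        "containers": [
            {
                "name": "web",
                "image": "nginx:1.27",
                "ports": [{"containerPort": 80}],
                "volumeMounts": [{"name": "shared-logs", "mountPath": "/var/log/nginx"}],
            },
            {
                "name": "log-tailer",
                "image": "busybox:1.36",
                "command": ["sh", "-c", "tail -F /logs/access.log"],
                "volumeMounts": [{"name": "shared-logs", "mountPath": "/logs"}],
            },
        ],
    },
}


def shared_volumes(manifest: Dict[str, Any]) -> List[str]:
    """Return the names of volumes mounted by more than one container."""
    mounts: Counter = Counter()
    for container in manifest["spec"]["containers"]:
        # Count each volume once per container, even if mounted twice.
        mounts.update({m["name"] for m in container.get("volumeMounts", [])})
    return sorted(name for name, count in mounts.items() if count > 1)


print(shared_volumes(pod))  # ['shared-logs']

# The same structure serialized as JSON, a format kubectl accepts for manifests.
manifest_json = json.dumps(pod, indent=2)
```

Because both containers also share the Pod's network namespace, the sidecar could reach the web server on `localhost:80` without any Service in between.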

Kubelet and Kube-proxy

Kubelet and Kube-proxy are two key components that operate within Kubernetes Worker Nodes, each with distinct but complementary roles in ensuring the proper functioning and connectivity of containerized applications.

Kubelet is an agent responsible for running and managing containers on the nodes. Every Worker Node in the Kubernetes cluster has a Kubelet instance that registers the node with the API server and constantly monitors the state of running Pods. When a Pod is assigned to its node, Kubelet handles starting the associated containers and ensuring they remain running as expected. If a container within a Pod stops or fails, Kubelet restarts it according to the Pod's restart policy. Keeping the desired number of Pod replicas is a separate job, handled by the controllers in the Control Plane.
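Whether a stopped container is restarted depends on the Pod's `restartPolicy`, which can be `Always`, `OnFailure`, or `Never`. The sketch below models that decision together with an exponential back-off between restarts. The function names are hypothetical, and the 10-second base and 300-second cap are assumptions based on the defaults described in the Kubernetes documentation.

```python
def should_restart(restart_policy: str, exit_code: int) -> bool:
    """Decide whether a terminated container should be started again."""
    if restart_policy == "Always":
        return True
    if restart_policy == "OnFailure":
        # Only a non-zero exit code counts as a failure.
        return exit_code != 0
    if restart_policy == "Never":
        return False
    raise ValueError(f"unknown restart policy: {restart_policy!r}")


def backoff_seconds(restart_count: int, base: int = 10, cap: int = 300) -> int:
    """Delay before the next restart: doubles each time, up to the cap."""
    return min(base * 2 ** restart_count, cap)


print(should_restart("OnFailure", exit_code=0))   # False
print(should_restart("OnFailure", exit_code=1))   # True
print([backoff_seconds(n) for n in range(6)])
# [10, 20, 40, 80, 160, 300]
```

The growing delay is why a container that keeps crashing is restarted less and less often rather than in a tight loop.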

Kubelet regularly communicates with the Kube-API server to update the state of Pods and receive instructions on which containers to start or terminate. This constant interaction allows the Control Plane to have an accurate view of the current state of the cluster, facilitating automatic resource management and response to potential failures. Kubelet therefore plays a crucial role in ensuring that applications can scale and dynamically adapt to user needs and cluster conditions.

Kube-proxy, on the other hand, handles Service networking. Its primary task is to ensure that traffic addressed to a Service is forwarded to one of the Pods backing that Service, enabling smooth communication within the cluster. Kube-proxy operates at the network level, programming rules with tools such as iptables or IPVS rather than handling each connection itself.
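Conceptually, the rules Kube-proxy maintains map a Service's virtual IP and port to the addresses of the Pods behind it. The toy model below captures that mapping in plain Python. `ToyServiceProxy` is a hypothetical class, the IP addresses are made up, and round-robin selection is used here only to keep the output deterministic; the real backend selection depends on the proxy mode in use.

```python
from typing import Dict, List, Tuple

ServiceKey = Tuple[str, int]  # (cluster IP, port)


class ToyServiceProxy:
    """Maps a Service address to its Pod endpoints (conceptual model only)."""

    def __init__(self) -> None:
        self._endpoints: Dict[ServiceKey, List[str]] = {}
        self._next: Dict[ServiceKey, int] = {}

    def set_endpoints(self, cluster_ip: str, port: int, endpoints: List[str]) -> None:
        """Replace the backends of a Service, e.g. after Pods come and go."""
        key = (cluster_ip, port)
        self._endpoints[key] = list(endpoints)
        self._next[key] = 0

    def route(self, cluster_ip: str, port: int) -> str:
        """Pick the Pod address that should receive the next connection."""
        key = (cluster_ip, port)
        backends = self._endpoints.get(key, [])
        if not backends:
            raise LookupError(f"no endpoints for service {cluster_ip}:{port}")
        target = backends[self._next[key] % len(backends)]
        self._next[key] += 1
        return target


proxy = ToyServiceProxy()
proxy.set_endpoints("10.96.0.10", 80, ["10.244.1.5:8080", "10.244.2.7:8080"])
print([proxy.route("10.96.0.10", 80) for _ in range(3)])
# ['10.244.1.5:8080', '10.244.2.7:8080', '10.244.1.5:8080']
```

Clients only ever see the stable Service address. When a Pod is replaced and its IP changes, only the endpoint list behind that address is updated.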

Kubernetes architecture: interaction between Control Plane and Worker Nodes

If you’ve made it this far, you will have certainly understood that Kubernetes architecture is designed to ensure a smooth and continuous interaction between the Control Plane and Worker Nodes, thus creating a highly dynamic and resilient environment for running containerized applications. This interaction is, in fact, fundamental for monitoring the cluster state, allocating resources, and guaranteeing the continuous operation of applications. Let’s take one final look at how the various elements discussed so far interact.

The Control Plane is, as we have seen, the brain of the Kubernetes cluster. Every time a user or external system sends a request to the cluster, it is directed to the Kube-API server, which serves as the central point of communication. The Kube-API server manages all interactions with the cluster and acts as an intermediary between the Control Plane and the Worker Nodes. Every change to the desired state of resources, such as creating or modifying a Pod, is sent to the Kube-API server, which records it in Etcd, the key-value store used to maintain the cluster configuration.

When a new Pod is created (that is, a new container or group of containers), the Kube-scheduler steps in to assign it to one of the available Worker Nodes, evaluating a series of criteria such as resource availability and affinities between Pods. Once the Kube-scheduler has made a decision, it records the assignment through the Kube-API server; the Kubelet on the chosen Worker Node, which watches the API server for Pods bound to its node, picks it up from there. At this point, the Kubelet starts the containers within the Pod and begins monitoring their state. This continuous communication between the Control Plane and the Worker Nodes is essential to ensure that the cluster maintains the desired state, and is facilitated by a constant flow of information. The Kubelets, present on every Worker Node, regularly send updates about the state of Pods and nodes to the Kube-API server, informing the Control Plane of any changes, such as Pod restarts, resource usage, or node failures.
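The flow can be sketched as a small simulation in which the components coordinate only through the Kube-API server rather than calling each other directly. Everything here is a simplified model: `ToyApiServer`, `scheduler_pass`, and `kubelet_pass` are hypothetical names, and the scheduler simply spreads Pods across nodes in turn.

```python
from typing import Dict, List, Optional


class ToyApiServer:
    """Holds Pod records; stands in for the API server and its store."""

    def __init__(self) -> None:
        self.pods: Dict[str, Dict[str, Optional[str]]] = {}

    def create_pod(self, name: str) -> None:
        self.pods[name] = {"nodeName": None, "phase": "Pending"}

    def bind(self, name: str, node: str) -> None:
        self.pods[name]["nodeName"] = node

    def set_phase(self, name: str, phase: str) -> None:
        self.pods[name]["phase"] = phase

    def unscheduled(self) -> List[str]:
        return sorted(n for n, p in self.pods.items() if p["nodeName"] is None)

    def pods_on(self, node: str) -> List[str]:
        return sorted(n for n, p in self.pods.items() if p["nodeName"] == node)


def scheduler_pass(api: ToyApiServer, nodes: List[str]) -> None:
    """Assign every unscheduled Pod to a node and record it via the API server."""
    for i, name in enumerate(api.unscheduled()):
        api.bind(name, nodes[i % len(nodes)])


def kubelet_pass(api: ToyApiServer, node: str) -> List[str]:
    """Start the Pods bound to this node and report their new state."""
    started = []
    for name in api.pods_on(node):
        if api.pods[name]["phase"] == "Pending":
            api.set_phase(name, "Running")
            started.append(name)
    return started


api = ToyApiServer()
api.create_pod("web-0")
api.create_pod("web-1")
scheduler_pass(api, ["node-a", "node-b"])
print(kubelet_pass(api, "node-a"))  # ['web-0']
print(api.pods["web-1"])            # {'nodeName': 'node-b', 'phase': 'Pending'}
```

Note that `scheduler_pass` and `kubelet_pass` never reference each other: each reads and writes state through the API server only, which is what keeps the components loosely coupled.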

The Kube-controller-manager, meanwhile, plays a crucial role in monitoring and maintaining the integrity of the cluster. Each controller, which manages different types of resources such as Deployments, StatefulSets, or DaemonSets, constantly observes the state of Pods and other objects. If a Pod stops or a node becomes unavailable, the Kube-controller-manager intervenes to restore the desired state, launching new Pods or restoring resources as needed.

In conclusion

Analyzing Kubernetes architecture requires a good deal of technical understanding, but it reveals a fascinating picture. Kubernetes represents a powerful and flexible solution for managing containerized applications at scale. Through the careful interaction between the Control Plane and Worker Nodes, Kubernetes offers a highly automated and resilient environment, capable of guaranteeing availability and scalability even under varying load conditions.

If this exploration of Kubernetes architecture has encouraged you to delve deeper into the possibilities offered by this technology, we are here to help. SparkFabrik has been supporting companies for years in building and maintaining modern Cloud Native applications, making the most of Kubernetes’ potential.