Today, Drupal represents not only the best enterprise-grade CMS solution, but also the one that integrates artificial intelligence in the most mature way. The feature-rich 1.3.0 release of the Drupal AI module marks an important transition from an experimental integration to a production-ready platform. So far, the adoption of LLMs in corporate CMSs has been held back by tangible risks: data privacy, model hallucinations, and a lack of observability over background operations. This version tackles these structural weaknesses by introducing advanced governance features, standardized telemetry flows, and orchestration tools that turn experiments into solid architectures.

The SparkFabrik team played an active role in the development of the core module, leading the design of the security and advanced search systems. The Cloud Native engineering approach made it possible to apply security-by-design and DevSecOps principles directly to artificial intelligence, ensuring that every interaction is traceable and secure.

To understand the extent of this ecosystem, it is useful to consult our complete overview of AI features in Drupal. As illustrated in the in-depth video by Marcus Johansson, Tech Lead of the Drupal AI Initiative, the update provides architectural foundations for complex operations. We detailed the journey of these implementations in our dedicated article on how we shaped the future of Drupal AI in 2025, demonstrating how the integration of language models requires cross-functional skills spanning CMS development and distributed infrastructures.

Why AI Guardrails transform Drupal into a secure enterprise platform

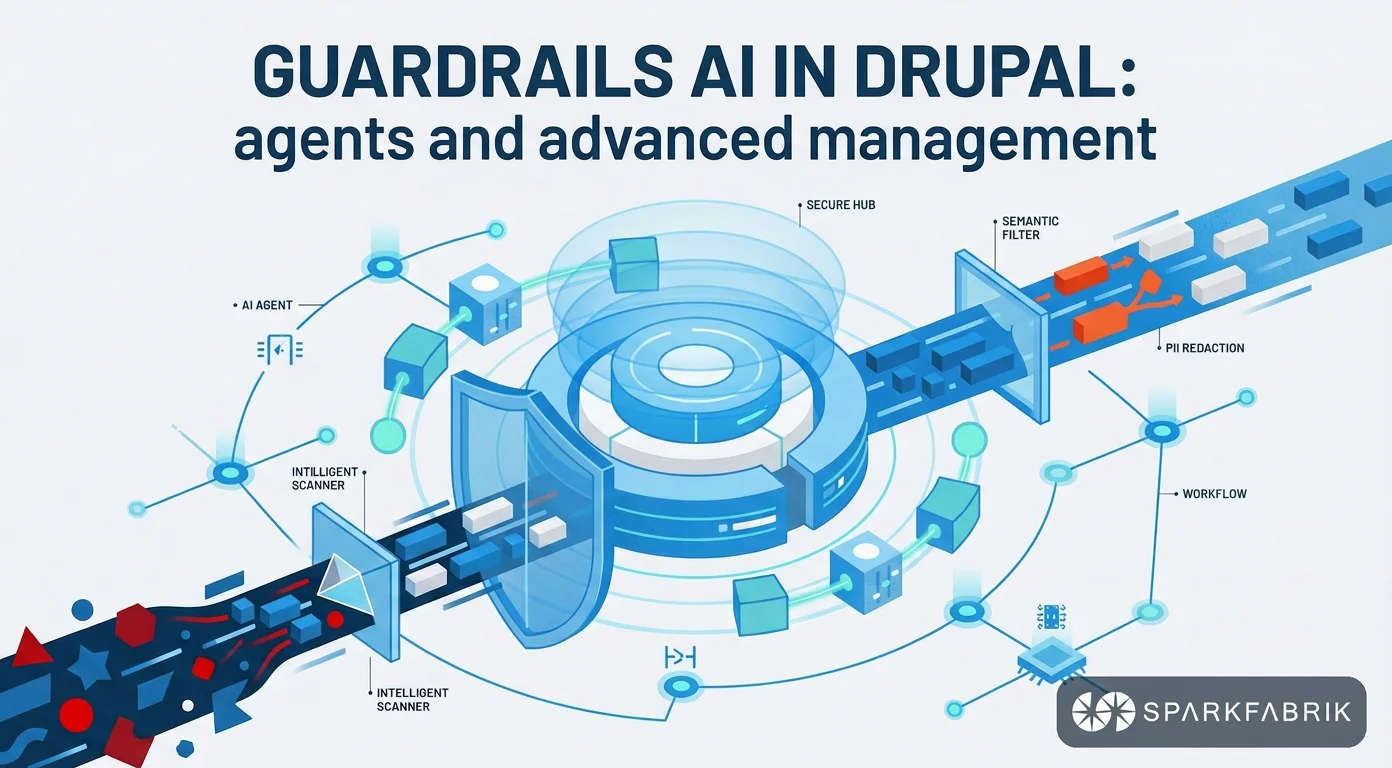

AI Guardrails make Drupal a secure platform by acting as bidirectional filters that intercept outgoing requests and validate the responses of Large Language Models. This governance system blocks sensitive data leaks and helps prevent hallucinations, providing the compliance that enterprise applications in production require.

Implementing these policies improves compliance for interactions with LLMs in both directions, outbound and inbound. For a deeper look at the design of these components, our detailed article on Guardrails in Drupal AI analyzes the architectural strategies for mitigating the risks of language models.

During the Drupal X Business event, Luca Lusso presented in detail how these protection mechanisms work. In his talk, he highlighted how enforcing strict rules shifts artificial intelligence from an experimental paradigm to a governable tool. The Cloud Native approach adopted in the design ensures that these controls run efficiently, keeping latency low so that editorial processes are not slowed down.

Interception and masking of sensitive data

Guardrails operate deep in the architecture, stopping sensitive data from leaking before the HTTP request leaves the corporate servers. This covers PII (Personally Identifiable Information), financial data, and intellectual property. The system can either block the request entirely or mask the input, using regular expressions or external validation services such as AWS Bedrock.

A practical example illustrates the effectiveness of this approach. Suppose an editor enters the code name of a classified product into a prompt, such as the internal project “MDX 250”. The configured Guardrail immediately intercepts the text. In a simpler case, a user includes personal data or a credit card number in the prompt, and the system blocks or masks it before anything is sent to public LLMs such as those from OpenAI or Anthropic.
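The block-or-mask behavior described above can be sketched in a few lines. This is a minimal Python illustration, not Drupal AI's own (PHP) implementation. The `BLOCKED_TERMS` list, the simplified patterns, and `apply_input_guardrail` are hypothetical. A production guardrail would use sturdier detectors (for example a Luhn check on card numbers) or an external validation service.

```python
import re
from dataclasses import dataclass, field

# Hypothetical policy: terms that must never leave the company.
BLOCKED_TERMS = ["MDX 250"]

# Hypothetical, deliberately simplified PII patterns (not production-grade).
MASK_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    # 13 to 16 digits, optionally separated by spaces or dashes.
    "CARD": re.compile(r"\b(?:\d[ -]?){12,15}\d\b"),
}


@dataclass
class GuardrailResult:
    allowed: bool
    text: str
    violations: list = field(default_factory=list)


def apply_input_guardrail(prompt: str) -> GuardrailResult:
    """Block prompts containing forbidden terms; mask PII in all others."""
    for term in BLOCKED_TERMS:
        if term.lower() in prompt.lower():
            # Block entirely and tell the user which policy was violated.
            return GuardrailResult(False, "", [f"blocked term: {term}"])
    violations = []
    masked = prompt
    for label, pattern in MASK_PATTERNS.items():
        masked, count = pattern.subn(f"[{label}]", masked)
        if count:
            violations.append(f"masked {count} {label} value(s)")
    return GuardrailResult(True, masked, violations)


result = apply_input_guardrail("Card 4111 1111 1111 1111, mail mario.rossi@example.com")
print(result.text)  # Card [CARD], mail [EMAIL]
print(result.violations)  # ['masked 1 EMAIL value(s)', 'masked 1 CARD value(s)']
print(apply_input_guardrail("Summarize the MDX 250 roadmap").allowed)  # False
```

Blocking and masking are kept as separate outcomes so the editor gets feedback that names the specific policy that was triggered.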

This preventive validation secures the data supply chain, ensuring that the corporate infrastructure does not become a channel for leaking trade secrets. The filters are applied in real time and give the user immediate feedback. By explaining exactly which security policy was violated, the system keeps editorial teams aware of the rules.

Agnostic architecture and bidirectional validation

The Guardrails system is designed with a strictly agnostic architecture, meaning it is not tied to a single vendor or a specific language model. This independence allows organizations to define centralized security policies. These rules remain valid even if you decide to migrate from one cloud provider to another, cutting down refactoring costs.

The protection offered by the module is structured on three distinct levels of intervention:

- Preventive blocking of the outbound request, which analyzes the user’s prompt and the provided context to identify violations of corporate policies before any network communication.

- Reformatting or blocking of the inbound response, which analyzes the output generated by the language model to intercept inappropriate content, offensive language, or responses that violate ethical guidelines.

- Mitigation of hallucinations and enforcement of the corporate tone of voice, reducing the risk that the model invents facts or adopts a communication style foreign to the brand guidelines.

This bidirectional validation ensures that the CMS maintains final authority over the content. Artificial intelligence is treated as a service provider that must be constantly supervised by strict business logic.
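The bidirectional flow can be pictured as a wrapper that runs input checks before calling any provider and output checks on the response. This is an illustrative Python sketch, not the module's plugin API. `Provider`, `guarded_chat`, and both example checks are hypothetical. The provider is modeled as a plain callable, so the same policies apply unchanged to any vendor.

```python
from typing import Callable, List, Optional

# Any vendor client can be adapted to this shape: prompt in, text out.
Provider = Callable[[str], str]
# A check returns None if the text passes, or a violation message.
Check = Callable[[str], Optional[str]]


class GuardrailViolation(Exception):
    pass


def no_confidential_terms(text: str) -> Optional[str]:
    return "confidential project name" if "MDX 250" in text else None


def no_offensive_language(text: str) -> Optional[str]:
    # Placeholder word list; a real system would call a moderation model.
    banned = {"idiot", "stupid"}
    words = {w.strip(".,!?").lower() for w in text.split()}
    return "offensive language" if words & banned else None


def guarded_chat(prompt: str, provider: Provider,
                 input_checks: List[Check], output_checks: List[Check]) -> str:
    # 1. Outbound: validate the prompt before any network call is made.
    for check in input_checks:
        reason = check(prompt)
        if reason:
            raise GuardrailViolation(f"request blocked: {reason}")
    response = provider(prompt)
    # 2. Inbound: validate the model output before it reaches the editor.
    #    Tone-of-voice or fact-checking rules would plug in here as extra checks.
    for check in output_checks:
        reason = check(response)
        if reason:
            raise GuardrailViolation(f"response blocked: {reason}")
    return response


fake_provider: Provider = lambda p: f"Summary of: {p}"
print(guarded_chat("Q3 newsletter", fake_provider,
                   [no_confidential_terms], [no_offensive_language]))
# Summary of: Q3 newsletter
```

Because the policies only depend on text in and text out, switching providers means swapping the callable, not rewriting the checks.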

How semantic Reranking improves RAG architectures on Drupal AI

Semantic Reranking improves RAG architectures on Drupal by introducing a second evaluation pass based on artificial intelligence. After the initial vector filtering, a specialized model reorders the retrieved documents by analyzing their actual contextual relevance, ensuring that Large Language Models receive precise information to generate responses.

The new Reranking operation type is another area where SparkFabrik contributed heavily, and it is essential for implementing effective Retrieval-Augmented Generation (RAG) architectures. Adopting these advanced techniques requires a new architectural approach oriented towards AI agents, in which the precision of information retrieval directly determines the quality of the final output.

Development companies frequently run into the limits of pure vector search, which often retrieves documents that are similar but not contextually relevant. By implementing reranking, we observed a 70% reduction in “false positives” during complex document queries and a more effective ordering of results. This deep semantic filter ensures that the language model receives only the context it strictly needs, which also optimizes token consumption.

Overcoming the limits of standard vector search

A standard vector database returns results based exclusively on the mathematical distance between the embedding vectors of the texts in a high-dimensional space. This method is fast and useful for sifting through large volumes of data. However, it does not always capture linguistic nuances or the real intent behind a complex query. The order of the documents provided as context to an LLM also significantly affects the quality of the final response: models tend to give more weight to information presented first, even as context windows keep growing.
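A common distance measure is cosine similarity. Many vector databases score candidates this way, comparing the query embedding $\mathbf{q}$ with each document embedding $\mathbf{d}$, both of dimension $n$. Others use the dot product or Euclidean distance instead:

$$
\operatorname{sim}(\mathbf{q}, \mathbf{d}) = \frac{\mathbf{q} \cdot \mathbf{d}}{\lVert \mathbf{q} \rVert \, \lVert \mathbf{d} \rVert} = \frac{\sum_{i=1}^{n} q_i d_i}{\sqrt{\sum_{i=1}^{n} q_i^2} \, \sqrt{\sum_{i=1}^{n} d_i^2}}
$$

The score reflects only the geometry of the two vectors, not whether the document actually answers the question.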

Reranking acts as a key second pass. After the vector search engine has retrieved an initial set of documents (for example, the top fifty results), a specialized model analyzes this subset. It evaluates the actual semantic relevance of each document to the specific question and assigns a new relevance score.

This process reorders the results, bringing to the top the documents that actually contain the answer, even if they were not mathematically the closest to the original query. The result is an optimized context that is then passed to the generative language model, substantially reducing the risk of incorrect answers.
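The two-pass idea can be shown with a toy example in plain Python. This is not how Drupal AI or Typesense implement it. The first stage ranks by cosine similarity over precomputed embeddings. The hypothetical `relevance_score` function stands in for a real reranking model such as a cross-encoder. Here it is approximated by query-term overlap, so the example runs without external dependencies.

```python
import math
from typing import Dict, List, Tuple


def cosine(a: List[float], b: List[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def relevance_score(query: str, doc: str) -> float:
    # Hypothetical stand-in for a cross-encoder: share of query terms found in the doc.
    q_terms = set(query.lower().split())
    d_terms = set(doc.lower().split())
    return len(q_terms & d_terms) / len(q_terms) if q_terms else 0.0


def retrieve_then_rerank(query: str, query_vec: List[float],
                         docs: Dict[str, Tuple[str, List[float]]],
                         first_k: int = 50, final_k: int = 3) -> List[str]:
    # Stage 1: cheap vector search keeps the top `first_k` candidates.
    candidates = sorted(docs, key=lambda d: cosine(query_vec, docs[d][1]),
                        reverse=True)[:first_k]
    # Stage 2: the reranker rescores only those candidates, using the full text.
    reranked = sorted(candidates, key=lambda d: relevance_score(query, docs[d][0]),
                      reverse=True)
    return reranked[:final_k]


docs = {
    "a": ("holiday policy overview", [0.9, 0.1]),
    "b": ("how to request parental leave", [0.6, 0.4]),
    "c": ("office parking rules", [0.1, 0.9]),
}
# "a" is closest in vector space, but "b" actually answers the question.
print(retrieve_then_rerank("request parental leave", [1.0, 0.0], docs,
                           first_k=2, final_k=1))  # ['b']
```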

Native integration between Vector Database and LLM

The technical flow implemented in version 1.3 involves a solid integration between an advanced search engine, such as Typesense (for which we are maintainers of the Drupal module) or a relational database with a vector extension, and the chosen AI provider. Drupal orchestrates this communication transparently. First, it queries the database to obtain the candidates, then it sends the results to the reranking service, and finally, it passes the reordered documents to the generative model.
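The orchestration order described above can be sketched with three interchangeable interfaces: fetch candidates, rerank them, then generate. The `SearchBackend`, `Reranker`, and `Generator` protocols, the `answer` function, and the toy classes are hypothetical names for illustration only. In Drupal the module performs this orchestration itself.

```python
from typing import List, Protocol


class SearchBackend(Protocol):
    def search(self, query: str, limit: int) -> List[str]: ...


class Reranker(Protocol):
    def rerank(self, query: str, docs: List[str]) -> List[str]: ...


class Generator(Protocol):
    def generate(self, prompt: str) -> str: ...


def answer(query: str, backend: SearchBackend, reranker: Reranker,
           generator: Generator, candidates: int = 50, context_docs: int = 5) -> str:
    # 1. Ask the vector-enabled search backend for candidate passages.
    hits = backend.search(query, limit=candidates)
    # 2. Let a dedicated reranking model reorder them by relevance.
    ordered = reranker.rerank(query, hits)[:context_docs]
    # 3. Only the best passages reach the (more expensive) generative model.
    context = "\n\n".join(ordered)
    prompt = f"Answer using only this context:\n{context}\n\nQuestion: {query}"
    return generator.generate(prompt)


# Toy implementations so the sketch runs end to end.
class StaticBackend:
    def search(self, query: str, limit: int) -> List[str]:
        return ["Long passage about parking.", "Leave: request via HR portal."][:limit]


class KeywordReranker:
    def rerank(self, query: str, docs: List[str]) -> List[str]:
        # Toy scoring: passages mentioning the first query word come first.
        key = query.split()[0].lower()
        return sorted(docs, key=lambda d: key not in d.lower())


class FirstLineGenerator:
    def generate(self, prompt: str) -> str:
        # Toy "model": echoes the first context passage it received.
        return prompt.splitlines()[1]


print(answer("Leave request process?", StaticBackend(), KeywordReranker(),
             FirstLineGenerator()))  # Leave: request via HR portal.
```

Keeping each stage behind its own interface is what lets the search engine, the reranking model, and the generative provider be replaced independently.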

This two-stage architecture increases the reliability of conversational systems. In internal documentation chatbots, employees get precise answers based on the correct corporate procedures. On e-commerce platforms, semantic searches return products that match the user’s purchase intent, which can significantly improve conversion rates.

By treating reranking as an agnostic operation, the infrastructure allows the use of specialized models (e.g., cross-encoders) trained specifically for semantic reordering. These models are architecturally different from generative LLMs and much more precise at this stage. The most powerful and expensive generative LLMs can thus be reserved for the final text generation. This separation of tasks optimizes operational costs and reduces the overall latency of the system.

Cloud Native observability and advanced API management

Cloud Native observability in the Drupal AI module takes shape through the integration of the OpenTelemetry standard. This architecture allows tracking every single request to the language models, monitoring crucial metrics in real time such as latency, token consumption, and operational costs, treating artificial intelligence as a measurable microservice.

Running artificial intelligence in production environments requires precise metrics and strict control over resources. This need ties directly into SparkFabrik’s engineering experience, where AI is treated not as a black box but as a distributed component. To fully understand this architectural philosophy, it is useful to explore the fundamentals and advantages of the Cloud Native approach.

Version 1.3 of Drupal AI introduces native support for OpenTelemetry, making it possible to trace the entire lifecycle of an autonomous agent. This level of transparency is necessary to diagnose bottlenecks and optimize performance. Exact visibility into the cost of each AI transaction allows CTOs to justify technology investments to corporate stakeholders with hard data.

Distributed tracing with OpenTelemetry

The standardized export of metrics, spans, and traces allows operations teams to analyze every single AI request with high granularity. When an editor requests the generation of a summary, the system records exactly how long the provider took to respond. It also tracks how many context tokens were sent and how many completion tokens were generated.
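The dependency-free Python sketch below shows what such a record contains: it times a provider call and attaches token counts as span-like attributes. It deliberately does not use the OpenTelemetry SDK. `record_llm_span`, `SPANS`, and `fake_summarize` are hypothetical. The attribute keys are modeled on OpenTelemetry's GenAI semantic conventions, which are still evolving. A real deployment would create spans with an OpenTelemetry tracer and send them through an exporter.

```python
import time
from contextlib import contextmanager
from typing import Dict, Iterator, List

# Collected "spans"; a real setup would hand these to an OpenTelemetry exporter.
SPANS: List[Dict[str, object]] = []


@contextmanager
def record_llm_span(name: str, model: str) -> Iterator[Dict[str, object]]:
    attributes: Dict[str, object] = {"gen_ai.request.model": model}
    start = time.perf_counter()
    try:
        yield attributes  # the caller adds token counts once the response arrives
    finally:
        # Real spans carry start/end timestamps natively; a duration attribute
        # just keeps this sketch simple.
        attributes["duration_ms"] = (time.perf_counter() - start) * 1000
        SPANS.append({"name": name, "attributes": attributes})


def fake_summarize(text: str) -> Dict[str, int]:
    # Hypothetical provider response carrying usage metadata.
    return {"input_tokens": len(text.split()), "output_tokens": 12}


with record_llm_span("summarize_node", model="example-model") as span:
    usage = fake_summarize("A long article body " * 50)
    span["gen_ai.usage.input_tokens"] = usage["input_tokens"]
    span["gen_ai.usage.output_tokens"] = usage["output_tokens"]

print(SPANS[0]["attributes"]["gen_ai.usage.input_tokens"])  # 200
```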

This data makes it possible to calculate operational costs in real time and attribute them to specific site features or editorial flows. Because the approach is based on open standards, it is compatible with popular tools such as Honeycomb, Grafana, and Datadog. DevOps teams can visualize the performance of artificial intelligence on the same dashboards they use to monitor the database or Kubernetes clusters.
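Once token counts are attached to each request, per-feature cost attribution is simple arithmetic. Each request costs input tokens × input price plus output tokens × output price. The sketch below aggregates that cost by site feature. The prices are placeholder values, not real provider rates, and the record format is hypothetical.

```python
from collections import defaultdict
from typing import Dict, List

# Placeholder prices in USD per 1,000 tokens: NOT real provider rates.
PRICES = {"example-model": {"input": 0.001, "output": 0.002}}


def cost_by_feature(records: List[Dict]) -> Dict[str, float]:
    """Sum the cost of each request under the site feature that triggered it."""
    totals: Dict[str, float] = defaultdict(float)
    for r in records:
        price = PRICES[r["model"]]
        cost = (r["input_tokens"] * price["input"]
                + r["output_tokens"] * price["output"]) / 1000
        totals[r["feature"]] += cost
    return dict(totals)


records = [
    {"feature": "summary", "model": "example-model", "input_tokens": 2000, "output_tokens": 500},
    {"feature": "summary", "model": "example-model", "input_tokens": 1000, "output_tokens": 500},
    {"feature": "seo_tags", "model": "example-model", "input_tokens": 500, "output_tokens": 100},
]
print({k: round(v, 6) for k, v in cost_by_feature(records).items()})
# {'summary': 0.005, 'seo_tags': 0.0007}
```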

The adoption of OpenTelemetry avoids lock-in to the proprietary monitoring tools of individual cloud vendors. Regardless of whether the infrastructure uses models hosted on AWS, Google Cloud, or local solutions, the format of the observability data remains consistent. This unified approach greatly simplifies the management of IT operations on a large scale.

Rate limiting, failover and normalized metadata

The advanced API management in version 1.3 transforms the way Drupal communicates with external providers. The system introduces rate limit thresholds and timeouts for HTTP requests that can be configured directly from the user interface. This new feature eliminates the need to write custom code to handle the limitations imposed by cloud services.
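Drupal AI 1.3 exposes these thresholds in the user interface, so site builders do not need code like this. The sketch below only illustrates what a request-rate threshold does. It is a generic token-bucket limiter, a common technique that is not necessarily the one the module uses. The class name and its parameters are hypothetical.

```python
import time


class TokenBucket:
    """Allow up to `rate` requests per second, with bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: int, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock
        self.last = clock()

    def try_acquire(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # caller should wait, queue the request, or fail over


# Deterministic demo with a fake clock: 2 requests/second, burst of 2.
fake_now = [0.0]
bucket = TokenBucket(rate=2, capacity=2, clock=lambda: fake_now[0])
print([bucket.try_acquire() for _ in range(3)])  # [True, True, False]
fake_now[0] = 0.5  # half a second later, one token has been refilled
print(bucket.try_acquire())  # True
```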

This evolution brings with it significant architectural advantages for platform stability:

- Implementation of automatic failover logic, which diverts traffic to a secondary model or an alternative provider when the primary service reaches the allowed request limit.

- Secure management of asynchronous calls, allowing AI agents to perform complex tasks in the background without blocking the main web server processes or causing timeouts for users.

- Normalization of metadata, which provides consistent information on model costs and technical capabilities regardless of the chosen provider, facilitating the switch from one vendor to another.

These protection mechanisms ensure that a spike in requests to the site’s intelligent features does not compromise the overall availability of the CMS. The platform degrades in a controlled manner, keeping critical services operational and ensuring enterprise-grade business continuity.
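The failover behavior from the first bullet can be sketched as an ordered list of providers, tried in turn. This is an illustrative Python sketch, not the module's implementation. `RateLimited`, `ProviderTimeout`, `call_with_failover`, and the two provider functions are hypothetical.

```python
from typing import Callable, List, Tuple


class RateLimited(Exception):
    """Raised by a provider adapter when it hits its request quota (e.g. HTTP 429)."""


class ProviderTimeout(Exception):
    """Raised by a provider adapter when the HTTP call exceeds its timeout."""


def call_with_failover(prompt: str,
                       providers: List[Tuple[str, Callable[[str], str]]]) -> Tuple[str, str]:
    """Return (provider_name, response) from the first provider that succeeds."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except (RateLimited, ProviderTimeout) as exc:
            # Degrade gracefully: record the failure and try the next provider.
            errors.append(f"{name}: {type(exc).__name__}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


def primary(prompt: str) -> str:
    raise RateLimited()


def secondary(prompt: str) -> str:
    return f"secondary answer to {prompt!r}"


print(call_with_failover("Draft a teaser", [("primary", primary), ("secondary", secondary)]))
# ('secondary', "secondary answer to 'Draft a teaser'")
```

Only quota and timeout errors trigger the fallback. Other exceptions propagate, so genuine bugs are not hidden behind a silent provider switch.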

The automation ecosystem: from editorial workflows to AI moderation

The Drupal AI automation ecosystem optimizes editorial workflows by integrating decision-making capabilities directly into the content management interface. Through tools like Field Widget Actions and automated moderation, the system reduces the cognitive load on teams, transforming complex manual tasks into fluid and immediate processes.

These automation features make the value of an AI software development company immediately tangible for the business. The impact translates into a return on investment based on time savings and fewer human errors (an estimated saving of over 20 hours per week of manual review and moderation for medium-sized editorial teams). The goal is to simplify content management by integrating AI transparently into daily operations.

User interface improvements include a new Markdown editor for drafting system prompts. This choice is particularly useful for two reasons. Language models handle Markdown well, and it is more compact than HTML. Markdown also gives prompts a clearer structure than plain text.

Finally, the Context Control Center (CCC) lets you define tone of voice, audience, policies, and company-specific details just once, in a single place. The various parts of the context can then be reused by different editorial teams to support their work.

The CCC also supports the auto-completion of variables and tokens, thus allowing administrators to dynamically “inject” the data of the current user or node into the instructions sent to the model. This increases the overall precision of the context, consequently improving the quality of the LLM’s output as well.
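Token injection into the context can be pictured as template substitution. The Python sketch below mimics Drupal's `[type:name]` token syntax, such as `[node:title]` or `[site:name]`. It is a hypothetical stand-in for Drupal's Token API, which is PHP. The `[context:audience]` token is invented for the example. Unknown tokens are left untouched so that missing data is easy to spot.

```python
import re
from typing import Dict

# Matches tokens like [node:title] or [current-user:name].
TOKEN = re.compile(r"\[([a-z_-]+):([a-z_-]+)\]")


def replace_tokens(template: str, data: Dict[str, Dict[str, str]]) -> str:
    def lookup(match: re.Match) -> str:
        group, key = match.group(1), match.group(2)
        # Leave unknown tokens untouched so missing data stays visible.
        return data.get(group, {}).get(key, match.group(0))
    return TOKEN.sub(lookup, template)


system_prompt = (
    "You write for [site:name]. Audience: [context:audience].\n"
    "Summarize the article \"[node:title]\" for [current-user:name]."
)
print(replace_tokens(system_prompt, {
    "site": {"name": "ACME Corp"},
    "node": {"title": "Q3 results"},
    "current-user": {"name": "giulia"},
}))
```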

Tying back to observability, the CCC also offers usage tracking, logging, and agent debugging features. An extensive suite of automated tests covers all of its functionality. These are fundamental aspects for engineers.

Content moderation and Object Detection

Content moderation based on artificial intelligence introduces a level of automated control over the texts entered by users or editors. The system analyzes the content in real time and can autonomously alter the moderation state of the node. For example, if inappropriate language is detected, the state automatically changes from published to flagged, requiring the intervention of a human supervisor.
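The moderation rule reduces to a small state transition, sketched below in Python. `classify_text` is a hypothetical placeholder for the AI moderation call, implemented here as a simple word list. The state names `published` and `flagged` follow the example in the text. Actual state names depend on the site's Content Moderation workflow.

```python
from dataclasses import dataclass
from typing import Set

# Hypothetical word list standing in for an AI moderation model.
FLAGGED_WORDS: Set[str] = {"scam", "idiot"}


@dataclass
class Node:
    title: str
    body: str
    moderation_state: str = "published"


def classify_text(text: str) -> bool:
    """Return True if the text looks inappropriate (placeholder logic)."""
    words = {w.strip(".,!?").lower() for w in text.split()}
    return bool(words & FLAGGED_WORDS)


def moderate(node: Node) -> Node:
    # Published content is moved to review; a human supervisor decides afterwards.
    if node.moderation_state == "published" and classify_text(node.body):
        node.moderation_state = "flagged"
    return node


print(moderate(Node("Offer", "This deal is a scam!")).moderation_state)  # flagged
```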

In parallel, the integration of Object Detection extends the analysis to multimedia content. Using computer vision or deep learning models, often run locally or through platforms like Hugging Face, the system recognizes specific objects within uploaded images. It returns the coordinates (bounding boxes) of the identified elements, enabling complex validations.

A typical use case involves blocking uploads that do not comply with corporate guidelines. The system can prevent the upload of an image if it does not detect the presence of a specific safety device in a construction site photo. Or it can reject images that contain competitors’ logos, automating a quality control process that would require hours of manual work.
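The validation step that follows detection can be written as rules over the detector's output. The sketch assumes a hypothetical detection format: a label, a confidence score, and a bounding box in pixels, similar to what many object-detection models return. The labels, the 0.6 threshold, and `validate_upload` are illustrative only.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Detection:
    label: str
    confidence: float
    box: Tuple[int, int, int, int]  # (x_min, y_min, x_max, y_max) in pixels


REQUIRED_LABELS = {"hard hat"}          # must appear in construction-site photos
FORBIDDEN_LABELS = {"competitor logo"}  # must never appear
MIN_CONFIDENCE = 0.6


def validate_upload(detections: List[Detection]) -> List[str]:
    """Return the reasons to reject the image (an empty list means accept)."""
    found = {d.label for d in detections if d.confidence >= MIN_CONFIDENCE}
    errors = [f"missing required object: {label}"
              for label in sorted(REQUIRED_LABELS - found)]
    errors += [f"forbidden object: {label}"
               for label in sorted(FORBIDDEN_LABELS & found)]
    return errors


photo = [Detection("person", 0.95, (40, 30, 210, 400)),
         Detection("competitor logo", 0.81, (300, 20, 360, 60))]
print(validate_upload(photo))
# ['missing required object: hard hat', 'forbidden object: competitor logo']
```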

Field Widget Actions for structured data

Field Widget Actions represent the deepest integration of automation within the editorial experience, bringing generative capabilities directly to individual Drupal fields. Instead of using a generic chatbot, the editor has contextual buttons that perform specific operations on the data during entry.

The technical use cases introduced (or improved) in version 1.3 cover a wide spectrum of operational needs:

- Extraction of physical addresses from unstructured text, normalizing geographical information through integrations with services like Google Places to automatically populate map fields.

- Generation of optimized SEO meta tags, creating catchy titles and relevant descriptions based on the analysis of the node’s textual content.

- Conversion of raw textual data into strictly structured JSON formats, an essential step for exposing consistent information via APIs in headless architectures.

- Automatic creation of FAQ sections from long documents.

- Text-to-speech operations that turn textual articles into audio files associated with media fields.

These actions transform the CMS from a simple information container into an active assistant. By forcing language models to respect predefined data schemas, the system ensures that the generated output is immediately usable by the site’s display logic. This approach drastically reduces the need for manual cleanup and formatting. Finally, these functions are easy for anyone to use, with no particular technical skills required.
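Forcing the model into a predefined schema usually means asking for JSON and validating it before saving. The sketch below validates a hypothetical FAQ payload using only the Python standard library. The field names are assumptions. Real projects often use a JSON Schema validator or the provider's own structured-output features instead.

```python
import json
from typing import Any, Dict, List

# Hypothetical schema for an FAQ field: key -> required Python type.
FAQ_ITEM_SCHEMA = {"question": str, "answer": str}


def parse_faq(raw: str) -> List[Dict[str, Any]]:
    """Parse LLM output and reject anything that does not match the schema."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on bad JSON
    if not isinstance(data, list):
        raise ValueError("expected a JSON array of FAQ items")
    for i, item in enumerate(data):
        if not isinstance(item, dict):
            raise ValueError(f"item {i} is not an object")
        for key, expected in FAQ_ITEM_SCHEMA.items():
            if not isinstance(item.get(key), expected):
                raise ValueError(f"item {i}: '{key}' must be {expected.__name__}")
        extra = set(item) - set(FAQ_ITEM_SCHEMA)
        if extra:
            raise ValueError(f"item {i}: unexpected keys {sorted(extra)}")
    return data


llm_output = '[{"question": "Is parking free?", "answer": "Yes, for visitors."}]'
print(parse_faq(llm_output)[0]["question"])  # Is parking free?
```

Rejecting malformed output at this boundary means the display layer only ever receives data in the expected shape.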

Conclusion

Drupal AI 1.3 represents a major update that highlights the maturity of the ecosystem. By providing advanced tools like Guardrails for security, Reranking for semantic precision, and integration with OpenTelemetry for observability, the module offers the necessary infrastructure to operate in complex and regulated enterprise environments.

The secure integration of language models within corporate processes requires cross-functional skills that go well beyond the simple installation of a plugin. A deep understanding of CMS development, Cloud Native distributed architectures, and strict data governance practices is necessary. Only an integrated approach, guided by method and experience, guarantees that technological innovation does not compromise the security or performance of the system.

To orchestrate these technologies in a scalable and secure way, the added value lies in relying on technological partners with proven experience in open-source dynamics and in custom artificial intelligence software development. The evolution of Drupal demonstrates that the future of content management belongs to platforms capable of combining editorial flexibility with engineering rigor. Contact our experts and tell us about your challenges to discover how to implement these solutions in your architecture.