How can you make applications more performant and scalable? The Cloud Native approach cannot do without containers and their orchestration: here’s how it works and what the advantages of Kubernetes are.

To understand the concept of container orchestration, we need to start with a definition of containers themselves.

Containers are a form of virtualization at the operating system level: each one packages an application together with its runtime environment, often built on a very lightweight Linux distribution, and shares the kernel of the host. Among the various OS-level virtualization solutions, they have become the most widespread, mature and reliable.

We can think of them as much lighter virtual machines, decoupled from the underlying infrastructure. Applications inside a container run directly on the host’s kernel; however, the operating system shows them only the resources allocated to that container and, where configured, the applications running in other containers. With this “trick”, it is easy to create multiple containers that run one or more programs, each with a subset of the computer’s overall resources at its disposal. Applications can run separately or concurrently, and they may or may not interact with each other.

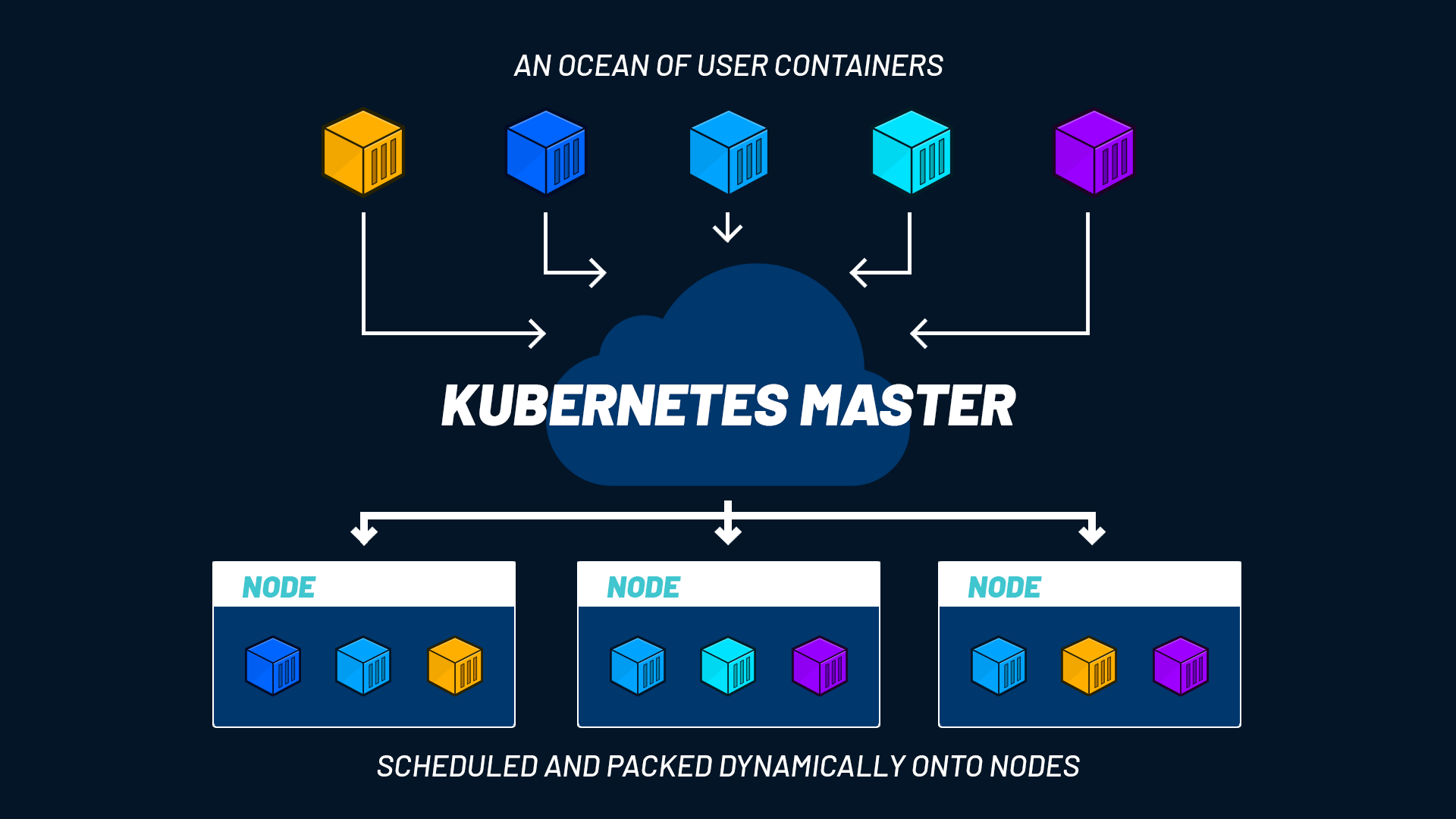

Since each container holds a single piece of software, or more often a microservice, it is easy to find yourself managing hundreds of containers in a short time, possibly across different clouds.

At this scale manual management becomes impractical, and the entire container lifecycle has to be automated. The solution is a process called orchestration, which simplifies operations, increases the resilience of the solution, improves overall security and therefore helps achieve business objectives.

From virtualization to container orchestration

Virtualization is not a recent achievement in computing. In fact, mainframes in the 1960s already used virtualization to divide system resources logically among different applications. A single very powerful mainframe could run multiple instances of one or more operating systems, so that different applications ran as if they were on physically separate computers. This consolidated the costs, and some of the management complexity, of operating multiple systems: a single host machine ran several guest machines.

The most modern forms of software and hardware virtualization can support a complete virtual environment. This approach is very useful for consolidating smaller servers onto a single, more powerful machine, reducing costs and complexity.

Virtual machines also facilitate the management, security and replicability of installations. They also significantly reduce the difficulty of transferring an installation from one site to another, or of restoring it in disaster recovery scenarios. However, virtual machines also have numerous limitations in terms of complexity, energy consumption and the types of workloads they suit. These limitations are amplified in the cloud, especially in the public cloud.

This is why solutions that isolate each individual piece of software in a container have emerged. Containers enable the construction of clusters characterized by ease of deployment, security, reliability, scalability and automation.

Containers accelerate software development because they make the environment consistent: the same container runs in the same way from the developer’s local machine to the public cloud, so teams can work continuously without environment-specific differences. Finally, because they are lightweight and easy to shut down, containers allow for optimized resource utilization.

It is clear that managing an environment of this type is complex and becomes increasingly so as the number of containers and running applications grows. However, there is also another important distinction to make. Unlike a traditional environment, where the stability of programs and installations is measured by their overall uptime, the greatest utility of containers lies in the dynamic management of rapidly changing workloads. Containers are all the more useful the faster (and more automatically) they can be instantiated and then terminated.

Container orchestration: what it’s for and why it’s done

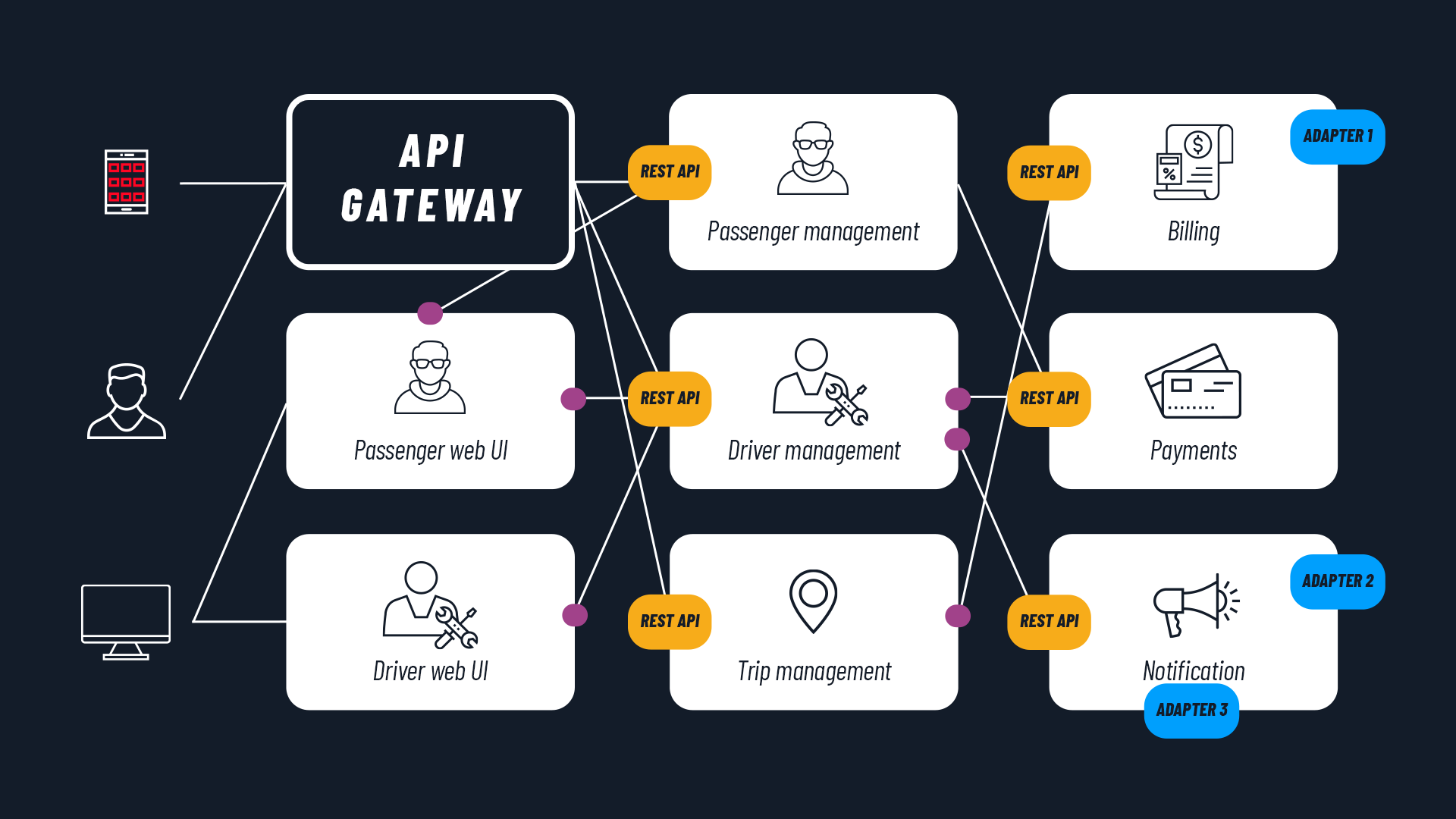

The orchestration process uses software tools to automate and manage the configuration, coordination and lifecycle of containers. The purpose of orchestration is to be able to manage a service-oriented or microservices architecture that allows business objectives to be aligned with the available technology: applications, data and infrastructure.

In any environment where containers are used, an orchestration method can always be applied to simplify both software distribution work and the management of all aspects of the service, including storage, networking and security.

If we look at it from a different perspective, orchestration manages the container lifecycle. This has important implications for teams that use a DevOps approach and CI/CD workflows. Orchestration and containers, in short, are two of the most important elements for creating Cloud Native applications.
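One way to picture lifecycle management is as a reconciliation loop: the orchestrator compares a declared desired state with what is actually running and starts or stops containers to close the gap. The sketch below is a toy model under stated assumptions, not how any particular orchestrator is implemented. The `Cluster` class and the `reconcile` function are hypothetical names, and containers are just entries in an in-memory dictionary.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class Cluster:
    """Toy in-memory stand-in for a container runtime (hypothetical)."""
    running: dict = field(default_factory=dict)  # container id -> image name
    _ids: count = field(default_factory=count, repr=False)

    def start(self, image: str) -> int:
        cid = next(self._ids)
        self.running[cid] = image
        return cid

    def stop(self, cid: int) -> None:
        self.running.pop(cid, None)


def reconcile(cluster: Cluster, desired: dict) -> None:
    """Start or stop containers until each image has its desired replica count."""
    # Include images that are running but no longer desired (target: 0 replicas).
    images = set(desired) | set(cluster.running.values())
    for image in images:
        want = desired.get(image, 0)
        current = [cid for cid, img in cluster.running.items() if img == image]
        for _ in range(want - len(current)):  # too few: start the missing ones
            cluster.start(image)
        for cid in current[want:]:            # too many: stop the surplus
            cluster.stop(cid)


cluster = Cluster()
desired = {"web": 3, "worker": 1}
reconcile(cluster, desired)                 # initial rollout: 4 containers
cluster.stop(next(iter(cluster.running)))   # simulate one container crashing
reconcile(cluster, desired)                 # the missing replica is recreated
print(sorted(cluster.running.values()))     # ['web', 'web', 'web', 'worker']
```

Running `reconcile` repeatedly is safe: when the actual state already matches the desired state, the function does nothing, which is what makes this pattern suitable for continuous automation.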

Kubernetes and orchestration tools

There are various tools for performing orchestration. The best known, and the de facto standard, is Kubernetes (or K8s), but two other projects are also worth mentioning: Docker Swarm (from Docker, the platform for building containers) and Apache Mesos.

All three have the ability to manage:

- container configuration and scheduling;

- their availability;

- resource allocation;

- provisioning and deployment mechanisms;

- scalability (which also means load rebalancing across the infrastructure and the possible removal of containers);

- their monitoring.

In addition, they can manage the configuration of the software contained in individual containers, and they can control (and protect) the interactions between containers.
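To make scheduling and resource allocation more concrete, here is a minimal sketch of a placement decision: given the free CPU and memory of each node, pick a node that can hold the new container. It assumes a deliberately simple policy (choose the fitting node with the most free CPU). Real schedulers weigh many more factors, and the `Node` class, the `schedule` function and the node names are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    cpu: float    # free CPU cores
    memory: int   # free memory in MiB
    containers: list = field(default_factory=list)


def schedule(nodes, name, cpu, memory):
    """Place a container on the fitting node with the most free CPU.

    Returns the chosen node's name, or None if no node has enough room.
    """
    candidates = [n for n in nodes if n.cpu >= cpu and n.memory >= memory]
    if not candidates:
        return None
    target = max(candidates, key=lambda n: n.cpu)
    # Reserve the resources so later placements see the updated capacity.
    target.cpu -= cpu
    target.memory -= memory
    target.containers.append(name)
    return target.name


nodes = [Node("node-a", cpu=2.0, memory=4096),
         Node("node-b", cpu=4.0, memory=8192)]

print(schedule(nodes, "api", cpu=1.5, memory=1024))    # node-b
print(schedule(nodes, "db", cpu=2.0, memory=4096))     # node-b
print(schedule(nodes, "cache", cpu=1.0, memory=512))   # node-a
print(schedule(nodes, "batch", cpu=3.0, memory=512))   # None
```

The last call returns `None` because no node has three free cores left. In a real cluster this is the point at which a container would wait for capacity or trigger the provisioning of a new node.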

Kubernetes has a number of additional features that make it particularly suited to hosting cloud native software. Two are especially important: horizontal scalability (through the Horizontal Pod Autoscaler, which adjusts the number of running replicas to match the load) and the ability to run clusters on public, private or hybrid cloud. Together they enable workload portability and simplify load balancing.
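The core calculation behind the Horizontal Pod Autoscaler, as described in the Kubernetes documentation, is desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue), skipped when the ratio is within a tolerance of 1.0 (0.1 by default). The sketch below reproduces only that formula. The function name, parameter names and the `min_replicas`/`max_replicas` defaults are illustrative assumptions, and the real autoscaler also handles details such as missing metrics and stabilization windows that are left out here.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Replica count suggested by the documented HPA formula (simplified)."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        wanted = current_replicas            # close enough to target: no change
    else:
        wanted = math.ceil(current_replicas * ratio)
    # Keep the result inside the configured bounds.
    return max(min_replicas, min(max_replicas, wanted))


# Metric values are average CPU utilization in percent, with a 60% target.
print(desired_replicas(4, current_metric=90, target_metric=60))   # 6
print(desired_replicas(4, current_metric=30, target_metric=60))   # 2
print(desired_replicas(4, current_metric=63, target_metric=60))   # 4
print(desired_replicas(4, current_metric=600, target_metric=60))  # 10
```

The first call scales up under load, the second scales down when utilization halves, the third leaves the count alone because the deviation is within tolerance, and the fourth is capped by `max_replicas`.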

All the advantages of Kubernetes

Created by Google, drawing on its experience with internal container orchestration, and released as an open source project in 2014, Kubernetes was donated in 2015 to the Cloud Native Computing Foundation, established within the Linux Foundation. This strategic choice places Kubernetes in a vital and responsive development environment, but above all one capable of driving the growth of Cloud Native technologies and defining the open technology standards of the coming years.

Kubernetes optimizes the use of IT resources with resulting advantages in terms of time and cost. Development, release and distribution become simpler and faster thanks to this orchestrator, while dynamic resource allocation reduces waste and costs. Automatic scale-down prevents the use of unnecessary resources. Not only that: in the event of traffic spikes, autoscaling increases availability and therefore improves the service.

Furthermore, Kubernetes is a valuable tool for getting the most out of multi-cloud or hybrid cloud solutions, since it provides a consistent platform on which applications can run in public and private environments alike, with little or no loss of functionality or performance.

Lastly, containerized infrastructures managed with Kubernetes also offer very high reliability: the orchestrator restarts containers that fail and reschedules them elsewhere when a node becomes unavailable.

Summing up: orchestration and Kubernetes in brief

Containers are a form of virtualization that allows you to build, package and deploy applications. Because a container holds all the application code and everything needed to run it, it can be moved easily between different types of servers.

The complexity arising from the subdivision into containers can be managed through orchestration processes: the most widely adopted tool for this purpose is Kubernetes, which provides numerous advantages across very different systems and environments.

Containerization and orchestration are two solutions to consider if you want to make your applications scalable, reliable and performant, fully embracing the Cloud Native approach.