From Italian companies like Il Giornale and Zambon to entertainment giants like Netflix, more and more companies are choosing to modernize their legacy applications and build Cloud Native applications. This approach lets them fully leverage new development and delivery technologies, as well as the opportunities offered by the Cloud.

In many cases, traditional enterprise applications designed with a monolithic approach no longer guarantee sufficient flexibility and performance. Above all, they struggle to evolve and keep up with modern technologies, and so lose competitiveness in the market.

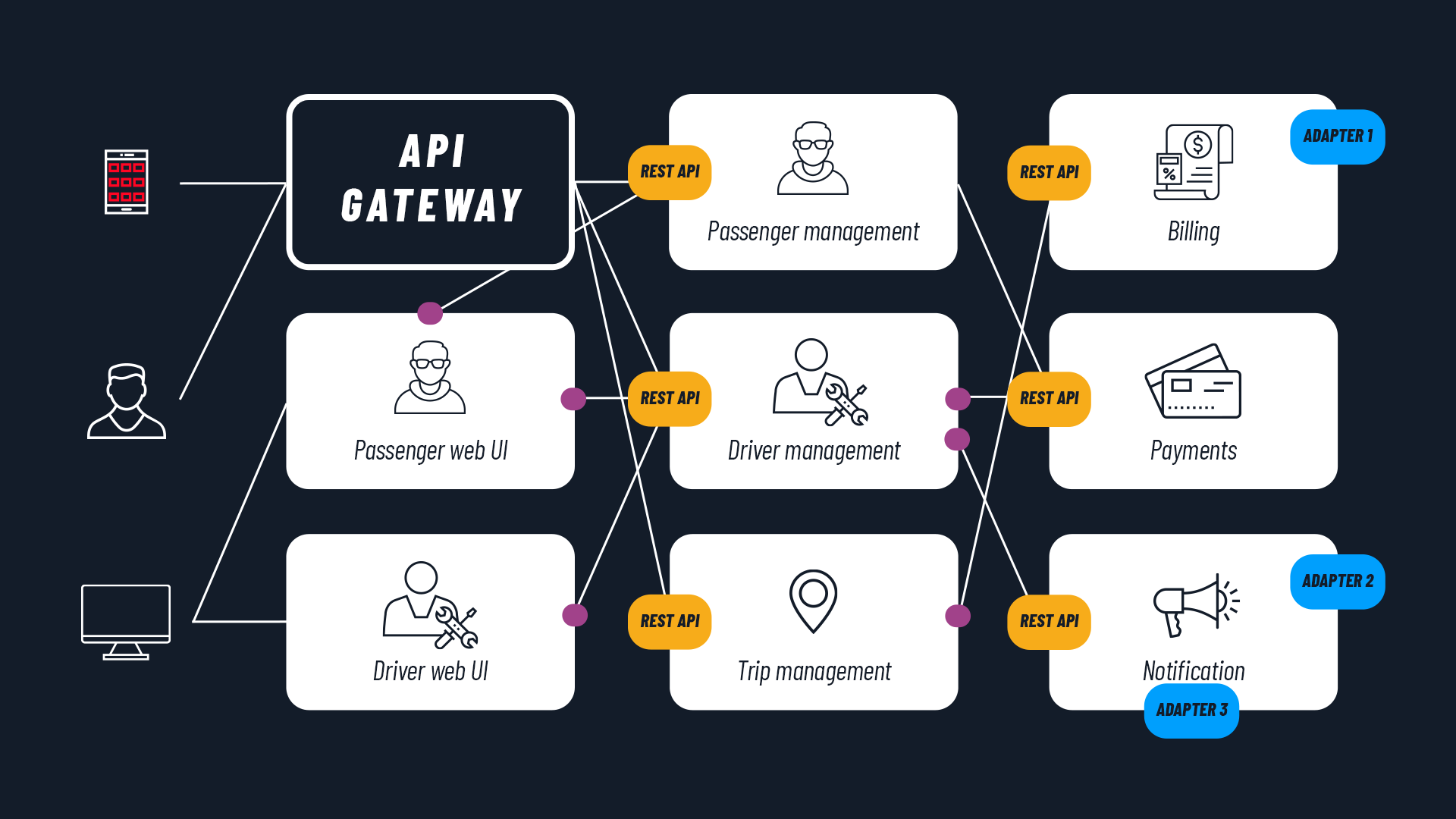

The migration to a Cloud Native architecture is often based on decomposing monoliths and highly coupled applications into simpler components. The application is segmented both vertically (multi-tier architectures, containerization, microservices) and horizontally, through the use of middleware and hyperscalable services provided by the vendor. The goal is greater flexibility and better performance.

Below we compare the two development approaches, Cloud Native and traditional, and highlight the benefits of application modernization by analyzing some key aspects. To begin with, here is a summary of the main differences:

| | Traditional applications | Cloud Native applications |
| --- | --- | --- |
| Mutability and predictability | Not immutable, difficult to predict | Immutability and predictability |
| Operating system | Dependent on the operating system | Operating system abstraction |
| Resources and capacity | Capacity over-provisioning | Capacity utilization |
| Method | Silos | Collaborative |
| Development | Waterfall development | Continuous delivery |
| Dependency | Interdependent | Independent |
| Scalability | Manual scaling | Auto-scalability |
| Backup and recovery | Expensive and unreliable | Automatic |

Mutability and predictability

Cloud Native approach: immutability and predictability

Cloud Native applications are designed to operate within immutable infrastructures, meaning infrastructures that do not change after deployment.

Containers encapsulate the application and all its dependencies, allowing it to function as a self-contained package. Containers are deployed on orchestration systems such as Kubernetes, capable of providing automatic scalability and self-healing strategies.

The content of the package, including service configuration, can only be modified by performing a new deployment. Every change therefore passes through the entire QA and automated testing pipeline, so that what goes live has been verified against expected behavior.
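The rule that a package changes only through a new deployment can be illustrated with a minimal sketch. The `Release` type, the `redeploy` helper, and the image name are hypothetical, chosen for illustration rather than taken from any orchestrator's API. The point is only that a change produces a new revision instead of editing what is already running.

```python
from dataclasses import dataclass, replace, FrozenInstanceError
from types import MappingProxyType
from typing import Mapping


@dataclass(frozen=True)
class Release:
    """Hypothetical description of a deployed package: image plus configuration."""
    image: str
    config: Mapping[str, str]
    revision: int = 1


def redeploy(current: Release, **config_changes: str) -> Release:
    """Return a NEW release carrying the changes; the current one is left untouched."""
    new_config = MappingProxyType({**current.config, **config_changes})
    return replace(current, config=new_config, revision=current.revision + 1)


# Read-only mapping, so the configuration cannot be edited in place either.
v1 = Release(image="shop-api:1.4.0", config=MappingProxyType({"LOG_LEVEL": "info"}))
v2 = redeploy(v1, LOG_LEVEL="debug")  # a new revision, ready to go through the pipeline
```

In this sketch `v1` still reports `LOG_LEVEL` as `info` after the change, which is what makes rolling back to a known-good revision straightforward.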

Traditional approach: not immutable, difficult to predict

Traditional applications are often designed separately from the infrastructure on which they will be deployed.

Deploying these applications often requires many manual interventions (for configuration and dependency management) that are performed directly on the production environment and are not part of an automated QA pipeline. The cost of each deployment tends to increase while reliability decreases, and errors or malfunctions are more likely to reach production, where they require urgent fixes and manual patches.

This creates a vicious circle: scaling the application becomes extremely difficult and expensive, as does creating test environments to verify changes to the service.

Operating system

Cloud Native approach: operating system abstraction

A Cloud Native application architecture always relies on some solution that abstracts the environment required by the application from the underlying infrastructure.

Instead of configuring, updating, and maintaining operating systems, with all the complexities this entails, especially in production environments, the team focuses on its own software and what it needs to run.

There are many solutions at many levels, both on-premises and vendor-provided. Kubernetes, Amazon ECS, and Google Cloud Run, for example, are different solutions, but they share the goal of decoupling the application from the operating system.
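One common way this decoupling shows up in application code is that the service reads what it needs from its runtime environment instead of from host-specific files or paths. The following is a minimal sketch under that assumption. The `Settings` type, the variable names `PORT` and `DATABASE_URL`, and the default values are illustrative choices, not requirements of any of the platforms mentioned above.

```python
import os
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class Settings:
    """Hypothetical settings the application needs in order to run."""
    port: int
    database_url: str


def load_settings(env: Mapping[str, str] = os.environ) -> Settings:
    """Build settings from environment variables, falling back to assumed defaults."""
    return Settings(
        port=int(env.get("PORT", "8080")),
        database_url=env.get("DATABASE_URL", "sqlite:///:memory:"),
    )


# The same code runs unchanged wherever the platform injects these variables.
local = load_settings({})
hosted = load_settings({"PORT": "9000", "DATABASE_URL": "postgres://db.internal/shop"})
```

Passing the environment as a parameter also makes the behavior easy to reproduce identically in development, testing, and operations.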

Traditional approach: dependent on the operating system

The architecture of traditional applications often heavily depends on the underlying operating system, as well as on hardware, storage, and support services.

These dependencies make migrating and scaling the application on a new infrastructure complex and risky, not to mention the difficulty of aligning development, testing, and operations environments to ensure identical behavior across all of them.

“It works on my machine” is a typical phrase heard in conversations between developers and operators in companies that adopt traditional models, and it is a symptom of these issues.

Resources and capacity

Cloud Native approach: capacity utilization

A Cloud Native application automates provisioning and infrastructure configuration, dynamically reallocating resources based on current needs. Building applications on Cloud runtimes enables immediate optimization of scalability, local resource utilization, process orchestration across available global resources, and error recovery to minimize downtime.

Resource management logic is configurable directly at the application level, so that assessments on this front can be made already during the design and development phase, rather than being imposed downstream by operators who have no knowledge of the application logic.

Traditional approach: capacity over-provisioning

Traditionally, the infrastructure solution is designed and sized based on load estimates, assumptions about the nature and demands of the application in the worst-case scenario, and the technical characteristics of the selected facility.

Long and often unrepresentative testing and stress-test phases on the prepared infrastructure must precede the application's production launch, introducing delays and possible rework. The solution is often over-provisioned to cover maximum load peaks. This implies fixed costs even during the frequent periods of low or medium load, with no guarantee of being able to scale if the peak load was underestimated.
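The cost of sizing for the peak can be made concrete with a small worked calculation. All the numbers below are invented for illustration: a hypothetical daily demand profile expressed in required instances per hour, and an assumed 20% safety margin on top of the estimated peak.

```python
import math

# Hypothetical demand: instances needed in each of the 24 hours of a day.
hourly_demand = [2, 2, 2, 2, 2, 3, 4, 6, 8, 9, 10, 10,
                 9, 8, 8, 9, 10, 8, 6, 5, 4, 3, 2, 2]

HEADROOM_PERCENT = 20  # assumed safety margin over the estimated peak

# Traditional sizing: enough capacity for the peak plus margin, running all day.
fixed_instances = math.ceil(max(hourly_demand) * (100 + HEADROOM_PERCENT) / 100)
fixed_hours = fixed_instances * len(hourly_demand)

# Elastic sizing: capacity follows demand hour by hour.
elastic_hours = sum(hourly_demand)

# Share of the fixed capacity that is actually used.
utilization = elastic_hours / fixed_hours

print(fixed_instances, fixed_hours, elastic_hours, round(utilization, 2))
```

With this profile the fixed setup pays for 288 instance-hours per day while only 134 are actually needed, so less than half of the provisioned capacity is used. Real workloads, pricing, and scaling delays will shift these figures.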

Method

Cloud Native approach: collaborative

Cloud Native supports DevOps practices, which combine people, processes, and tools, promoting close collaboration between development and operations functions, to accelerate and facilitate the deployment of code to production.

Streamlined deployment processes imply greater speed and lower costs, making it possible to perform more deployments, more frequently. This configuration facilitates frequent releases of new features and roll-forward strategies in case of issues.

Traditional approach: silos

The traditional approach involves an over-the-wall handoff of the final application code from developers to operations.

System administrators find themselves operating applications they know little or nothing about, on infrastructures that developers know little or nothing about. Organizational priorities take precedence over the value delivered to the end user, resulting in internal conflicts, slow and compromised deliveries, and low staff morale.

Development

Cloud Native approach: continuous delivery

DevOps teams make individual software updates available for release as soon as they are ready and validated by the business.

Organizations that release software continuously receive feedback more quickly and can respond more effectively and efficiently to market needs.

Continuous delivery is implemented through the automation of testing and QA and the reduction of deployment costs and time, which together enable small, frequent releases with a high probability of success.

Traditional approach: waterfall development

Software is released periodically, typically weeks or months apart, although many of the new features were often ready long before the release and had no particular dependencies.

The features that customers want arrive late, and the company loses opportunities to compete, acquire customers, and increase revenue. User adoption is also slower, because users receive many new features all at once and struggle to provide feedback or to learn entire new parts of the application.

Larger releases imply longer testing times and a lower probability of success, with more complexity to manage when corner cases or unforeseen issues arise.
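A rough way to see why release size affects the probability of success is to assume, as a deliberate simplification, that each change in a release is free of defects with the same probability and that changes fail independently of one another. Under that assumption the probability that a whole release is clean is the per-change probability raised to the number of changes. The 99% figure below is an invented example, not a measured value.

```python
def release_success_probability(per_change_success: float, changes: int) -> float:
    """Probability that every change in a release is defect-free.

    Simplified model: changes are independent and equally reliable.
    """
    if not 0.0 <= per_change_success <= 1.0:
        raise ValueError("per_change_success must be between 0 and 1")
    if changes < 0:
        raise ValueError("changes must be non-negative")
    return per_change_success ** changes


small_release = release_success_probability(0.99, 1)   # one change at a time
large_release = release_success_probability(0.99, 50)  # fifty changes batched together
```

In this model a single change succeeds 99% of the time, while a batch of fifty succeeds only about 61% of the time. When a small release does fail, there is also just one change to inspect.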

Dependency

Cloud Native approach: independent

Microservices architecture breaks down applications into small, vertical, modular, independent, and loosely coupled components.

The development of different components is assigned to smaller, independent teams, which makes frequent and unconstrained updates possible, along with more granular scalability and failover recovery capabilities without impacting other services.

With this configuration, teams must define clear communication interfaces between services. This requires architectural analysis and promotes a clear understanding of application workflows, making the operation of individual components transparent and documented. In turn, this formalism facilitates the reuse of well-documented components by other involved vendors or other business units.
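The idea of a clear communication interface between services can be sketched in code as an explicit contract that callers depend on, independent of any implementation. The names below (`BillingService`, `Invoice`, `InMemoryBilling`, `checkout`) are hypothetical. In a real system the contract would more likely be an HTTP or messaging schema, but the principle is the same.

```python
from dataclasses import dataclass
from typing import Dict, Protocol


@dataclass(frozen=True)
class Invoice:
    """Data exchanged across the service boundary."""
    order_id: str
    total_cents: int


class BillingService(Protocol):
    """The documented contract: what a billing service must offer to its callers."""

    def create_invoice(self, order_id: str, total_cents: int) -> Invoice: ...


class InMemoryBilling:
    """One possible implementation, owned and released by the billing team."""

    def __init__(self) -> None:
        self.invoices: Dict[str, Invoice] = {}

    def create_invoice(self, order_id: str, total_cents: int) -> Invoice:
        invoice = Invoice(order_id=order_id, total_cents=total_cents)
        self.invoices[order_id] = invoice
        return invoice


def checkout(billing: BillingService, order_id: str, total_cents: int) -> Invoice:
    """The ordering side depends only on the contract, not on the implementation."""
    return billing.create_invoice(order_id, total_cents)


invoice = checkout(InMemoryBilling(), "order-1", 1250)
```

Because `checkout` only knows the contract, the billing team can replace or redeploy its implementation without coordinating a release with the ordering team.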

Traditional approach: interdependent

Monolithic architectures bundle many disparate services into a single deployment package, effectively making services interdependent and undermining agility in both development and deployment phases.

What happens inside the monolith is opaque and too often undocumented. Onboarding new developers is difficult: the code of a complex application is hard to read and reveals "how" the application works, but not "why".

This knowledge therefore risks having to be rebuilt from scratch on the next project. A large share of the budget goes into reconstructing components that already exist, starting again from domain analysis and with the risk of creating misalignments.

Scalability

Cloud Native approach: auto-scalability

Infrastructure automation at scale greatly reduces downtime caused by human error.

While people tend to make mistakes when overloaded and forced into repetitive tasks, automation does not suffer from these problems, faithfully replicating the same procedures regardless of the size of the deployment infrastructure.

Although traditional operations have already embraced, in the most virtuous cases, provisioning automation systems (for VMs or physical machines), Cloud Native pushes this concept even further: a truly Cloud Native architecture addresses the automation of entire systems, not just provisioning.
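As an illustration of what automated scaling computes, the sketch below follows the ratio-based rule described in the Kubernetes Horizontal Pod Autoscaler documentation: the desired replica count is the current count multiplied by the ratio between the observed metric and its target, rounded up. It is a simplified model. The real controller also applies tolerances, stabilization windows, and readiness checks, and the function name, parameter names, and default bounds here are assumptions.

```python
import math


def desired_replicas(current_replicas: int,
                     current_metric: int,
                     target_metric: int,
                     min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Scale the replica count in proportion to how far the metric is from its target."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Clamp to the configured bounds so a metric spike cannot scale without limit.
    return max(min_replicas, min(max_replicas, raw))


scale_out = desired_replicas(4, current_metric=90, target_metric=60)   # load above target
scale_in = desired_replicas(4, current_metric=30, target_metric=60)    # load below target
capped = desired_replicas(4, current_metric=600, target_metric=60)     # hits max_replicas
```

Here four replicas running at 90% average CPU against a 60% target become six, and the same four replicas at 30% shrink to two, with no operator involved in either decision.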

Traditional approach: manual scaling

Traditional infrastructure management requires human operators to manually create and manage servers, networks, and storage volumes.

At scale, correctly diagnosing problems is a very complex and difficult job for human operators. Manually scaling a complex service can become a titanic task for a team of system administrators entrusted with responsibility for every service level, from hardware health to correct application-level configuration.

The repetitiveness inherent in these tasks increases the risk of error, and it is not uncommon to discover that the source of a malfunction was a single service in a pool that was never updated.

Backup and recovery

Cloud Native approach: automatic backup and recovery

A container architecture makes failover recovery much easier. Even in the case of a large-scale problem, individual containers, being immutable and self-contained, can be redeployed automatically in another data center using the same delivery procedures.
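Automatic recovery of this kind is typically driven by comparing a declared desired state with what is actually running and closing the gap. The following is a minimal sketch of one such reconciliation pass, not the algorithm of any specific orchestrator. The service names, zone names, and round-robin placement are assumptions made for the example.

```python
from collections import Counter
from itertools import cycle
from typing import Dict, List, Tuple

Placement = Tuple[str, str]  # (service name, data center or zone)


def reconcile(desired: Dict[str, int],
              placements: List[Placement],
              healthy_zones: List[str]) -> List[Placement]:
    """Drop containers in unhealthy zones and recreate the missing ones elsewhere."""
    if not healthy_zones:
        raise ValueError("no healthy zone available")
    surviving = [(svc, zone) for svc, zone in placements if zone in healthy_zones]
    running = Counter(svc for svc, _ in surviving)
    zones = cycle(healthy_zones)  # naive round-robin over the healthy zones
    for svc, wanted in desired.items():
        for _ in range(wanted - running[svc]):
            surviving.append((svc, next(zones)))
    return surviving


desired_state = {"web": 3, "worker": 1}
before = [("web", "dc-a"), ("web", "dc-a"), ("web", "dc-b"), ("worker", "dc-a")]

# Data center "dc-a" is lost: only "dc-b" and "dc-c" remain healthy.
after = reconcile(desired_state, before, healthy_zones=["dc-b", "dc-c"])
```

After the pass, the desired three `web` containers and one `worker` are running again, all in the surviving data centers, because each container can be recreated from the same immutable package.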

Volumes (always external to containers) and storage services such as databases and CDNs are easily made redundant with multi-zone and even multi-vendor strategies, and backups are generally configurable as an additional service on the major public Clouds. Data durability in the Cloud is ultimately far higher than that of any single physical device.

Traditional approach: expensive and unreliable backup and recovery

Most traditional architectures lack automated backup, disaster recovery, and business continuity mechanisms.

To ensure effective disaster recovery, staff must establish and enforce strict policies and procedures at all levels (from development teams to operators), frequently review and test the quality and completeness of backup archives, ensure that recovery automation works, and guarantee data distribution that accounts for potential large-scale local problems (fires, collapses, serious electrical issues, etc.).

Unfortunately, the cost of such procedures means they are often neglected. When a real problem occurs, it is all too likely that procedures are outdated or incorrect, backups are incomplete or stale, documentation is missing, and key personnel are unprepared.

3 advantages of Cloud Native applications

The comparison shows that Cloud Native architecture contributes to:

- increasing the flexibility of applications, eliminating dependencies between services and with the infrastructure;

- accelerating the response capability of developers, who can work on individual microservices without intervening on the entire package;

- optimizing resource utilization thanks to automation, reducing human error.

This is why companies should immediately embark on the path of migration to the new Cloud Native paradigms, in order to reap the greatest benefits from the new hybrid, heterogeneous, and distributed infrastructures.