Containerization entered the mainstream with the release of Docker in 2013. Since then, containers have made applications easier to port and deploy, and have helped make deployments more secure. Let’s explore the most important aspects of containers and try to answer a question: do containers represent the future?

What is containerization?

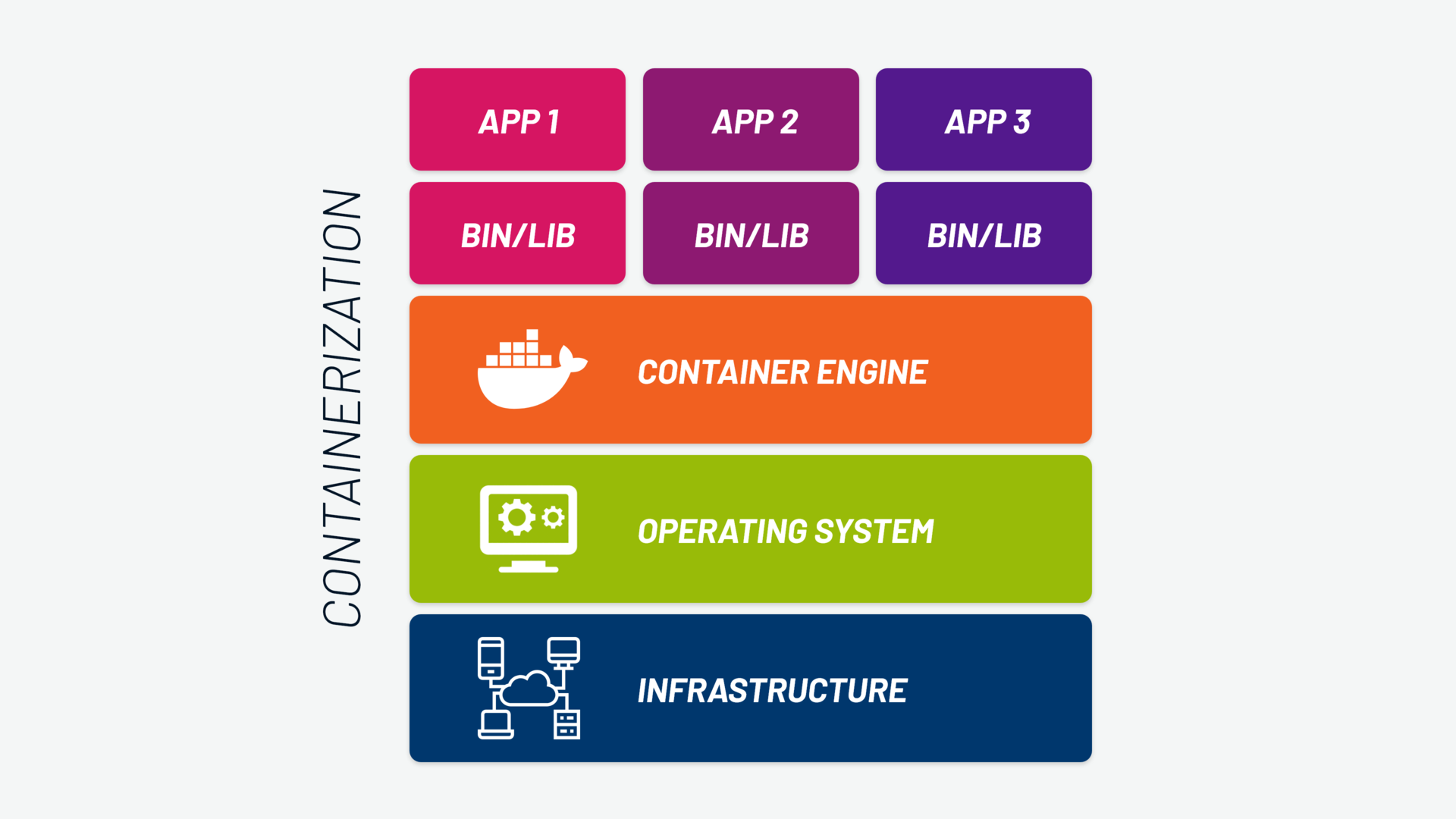

Containerization consists of bundling software code and its required components — such as libraries, frameworks and other dependencies — into an isolated package known as a “container”. Using containers, software can be run reliably and predictably on any system that supports container technology.

We can define a container as a virtual package: a logical structure that encapsulates application code and all the components necessary for it to function. This way, the container can operate in different execution environments and be easily moved from one environment to another.

What is containerization used for?

Containerization enables development teams to move with agility, perform software deployment efficiently and operate at an unprecedented scale.

To understand containerization, we need to take a step back. Traditional software is installed and run within a platform that provides all the dependencies our application needs to execute. The dependencies we’re talking about are themselves software, in specific release versions, which makes the portability of the whole package very complex.

If we also add the underlying layer to the equation, namely the operating system, with its own specific method of installing software, it becomes extremely difficult to guarantee that the code works correctly regardless of the environment in which it is executed.

That’s why, by isolating software components within containers, the deployment process becomes faster and more reliable, allowing teams to efficiently manage large volumes of workloads.

How does containerization work?

The container logic overcomes the limitations of traditional software. Containers hold the entire packaged application (“packaging of software”), with all the components necessary for it to function: the application’s binary code (or the code that needs to be interpreted), runtime components such as libraries, dependencies, configuration files and any system tools.

This way, containerized apps — applications encapsulated in containers — can be quickly moved to other platforms and bare metal or cloud infrastructures. As a result, containerization makes it simpler and faster to deploy applications.
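
As a rough illustration of what “packaging” means in practice, the sketch below renders a minimal Dockerfile (the text recipe Docker uses to build an image) from a Python description of the components listed above. The base image tag, file names and start command are hypothetical placeholders, not taken from a real project.

```python
from dataclasses import dataclass, field


@dataclass
class AppPackage:
    """The pieces a container image bundles together (all values hypothetical)."""
    base_image: str                 # runtime and system tools
    dependency_file: str            # list of libraries the app needs
    source_dir: str = "."           # the application code itself
    start_command: list = field(default_factory=list)


def render_dockerfile(pkg: AppPackage) -> str:
    """Turn the description into Dockerfile text using standard instructions."""
    cmd = ", ".join(f'"{part}"' for part in pkg.start_command)
    lines = [
        f"FROM {pkg.base_image}",
        "WORKDIR /app",
        f"COPY {pkg.dependency_file} .",
        f"RUN pip install --no-cache-dir -r {pkg.dependency_file}",
        f"COPY {pkg.source_dir} .",
        f"CMD [{cmd}]",
    ]
    return "\n".join(lines) + "\n"


example = AppPackage(
    base_image="python:3.12-slim",   # assumed base image
    dependency_file="requirements.txt",
    start_command=["python", "app.py"],
)
print(render_dockerfile(example))
```

Everything the application needs, from the runtime to its libraries and its own code, ends up described in one place, which is what makes the resulting image self-sufficient.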

The birth of containerization

Containers were born as an attempt at isolation, with security and stability as the primary objectives. Well before Docker, efforts were made to separate applications or workloads from the rest of the system, to achieve a form of “containment”.

In the first “container-like” approaches, the kernel had to allow the execution of multiple instances of “user space”. Each instance, separated from the others, constituted an isolated container, dedicated to a single application or workload.

The first examples of this approach were FreeBSD “jails” and workload partitioning on IBM’s AIX. Numerous variants were devised (from DragonFly BSD’s “virtual kernels” to OpenVZ’s “virtual private servers” to Solaris “zones”), but these were tied to specific hardware architectures and operating systems, unlike modern containers.

The idea of containers and containerized applications as we understand them today dates back to 2013, when Docker and its Docker Engine were introduced. In 2015 came the launch of the Open Container Initiative, founded by Docker among others. This latter project produced the industry standards for container formats and runtimes and made it possible to use different containerization tools on different platforms, always using the same containers.

Containerization: what are the benefits?

As we’ve seen, containerizing an application results in more efficient and faster execution across different environments, improving workflows and productivity. Let’s look in detail at the advantages and their direct consequences.

1. Application portability and flexibility

A container can be moved from one platform to another without issues, because it carries with it all the dependencies needed for its execution.

The fact that it can be written once and run in different environments without the need for reconfiguration generates many other positive consequences. It allows, for example, having the same environment for development on a laptop and for execution in a cloud cluster. This also ensures that the application’s behavior in our local development environment is identical in release environments as well.

An additional advantage comes from reduced vendor lock-in, since the portability achieved allows you to switch cloud infrastructure providers and move your workloads without having to rewrite applications.

In general, with containers, flexibility increases, the deployment phase becomes simpler, and the release and update of applications and software products is faster. It is thus possible to get the most out of software investments, whether they are existing resources or new opportunities offered by the market.
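
One way to picture why the same container behaves identically everywhere: images are commonly identified by a SHA-256 digest of their content, so identical bytes yield the identical identifier on a laptop or in a cloud cluster. The sketch below mimics that idea with Python’s `hashlib`; the byte strings stand in for real image content and are purely illustrative.

```python
import hashlib


def content_digest(content: bytes) -> str:
    """Return a digest string in the 'sha256:<hex>' style used for image identifiers."""
    return "sha256:" + hashlib.sha256(content).hexdigest()


# Hypothetical stand-ins for the packaged application bytes.
built_on_laptop = b"app code + libraries + config"
pulled_in_cloud = b"app code + libraries + config"
modified_copy = b"app code + libraries + config (patched)"

# Identical content gives an identical identifier; any change gives a new one.
print(content_digest(built_on_laptop) == content_digest(pulled_in_cloud))  # True
print(content_digest(built_on_laptop) == content_digest(modified_copy))    # False
```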

2. Lightweight nature

A second benefit of containers is their lightweight nature, particularly compared to traditional virtual machines.

Unlike a virtual machine, which contains its own full operating system with all the resulting maintenance burden and runtime overhead, a container shares the kernel of the host operating system (typically Linux). It can therefore contain nothing more than an app and its own execution environment.

For this reason, VMs are typically measured in gigabytes and are associated with monolithic, traditional IT architectures, while containers tend to be significantly more compact and are used differently: they fit naturally with DevOps, cloud and CI/CD practices. This concept leads us straight to the third benefit.

3. Modernity and scalability

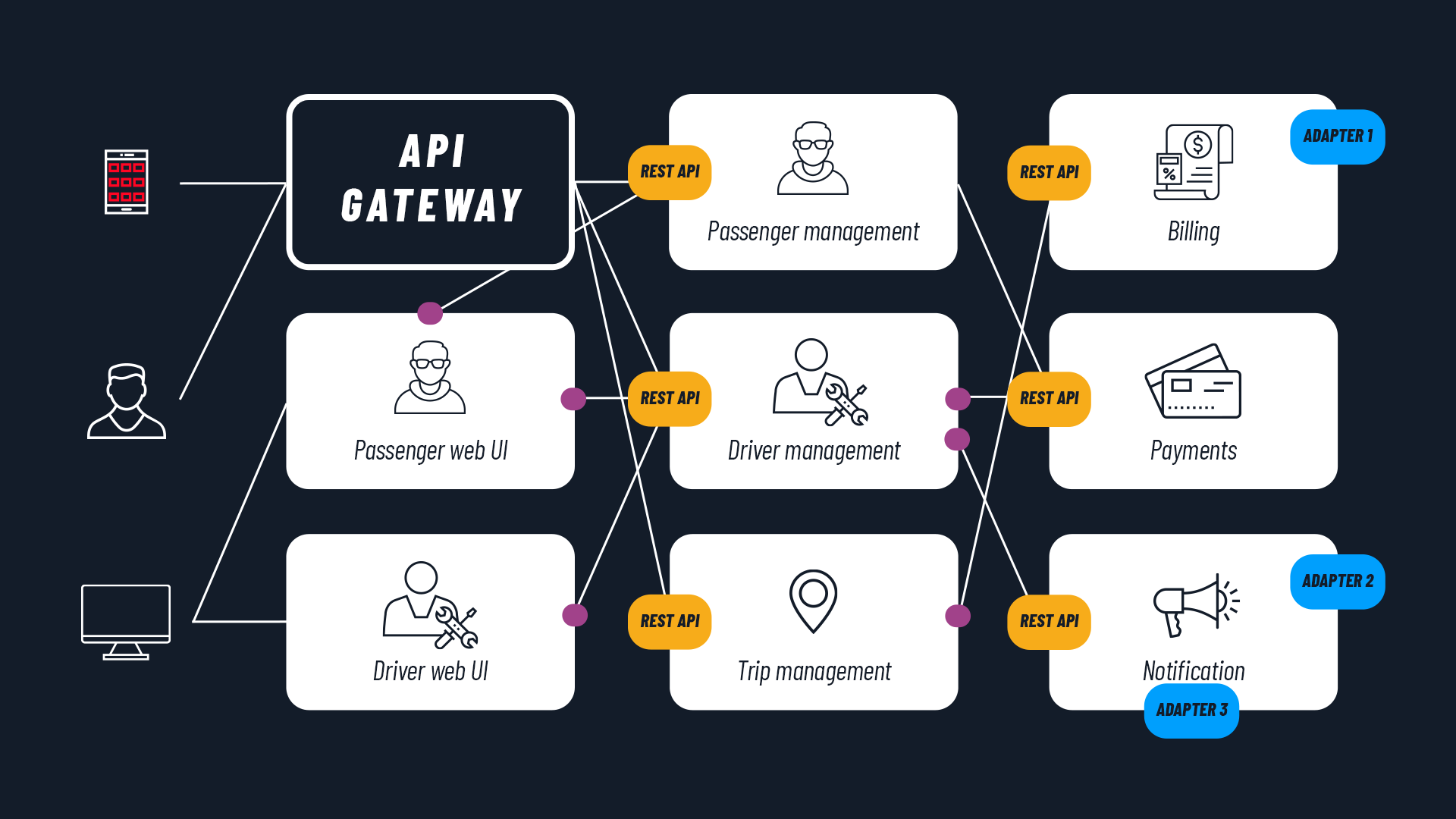

Containers are not only lightweight and portable; they are also well suited to modern application development paradigms: DevOps, serverless systems and microservices.

Startup times are shorter, memory and processor resources on the physical machine are used more efficiently, the development environment is consistent with the production environment, and Cloud Native applications are better supported. All of this also translates into greater horizontal scalability.

In general, containers are ideal for creating microservices architectures. This is because microservice architectures can be implemented and scaled with greater granularity compared to traditional monolithic applications.
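
Horizontal scaling boils down to simple arithmetic: run enough identical container replicas to cover the load. The sketch below assumes a hypothetical service where each replica handles a fixed number of requests per second; the figures are illustrative, not measurements.

```python
import math


def replicas_needed(load_rps: float, capacity_per_replica_rps: float,
                    min_replicas: int = 1) -> int:
    """Number of identical container replicas required to serve the load."""
    if capacity_per_replica_rps <= 0:
        raise ValueError("capacity_per_replica_rps must be positive")
    return max(min_replicas, math.ceil(load_rps / capacity_per_replica_rps))


# Hypothetical figures: each replica serves about 200 requests per second.
for load in (150, 900, 2500):
    print(load, "rps ->", replicas_needed(load, 200), "replicas")
```

Because each microservice is scaled on its own, only the services under pressure need extra replicas, which is the granularity advantage over scaling a whole monolith.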

Containerization: what are the challenges?

The main disadvantage of containers stems from the relative novelty of the technology, which is constantly evolving.

Although container adoption has accelerated strongly since 2013 and developers can choose from different tools on different platforms, these tools change frequently and some versions are less stable than others.

This is especially true when containerization technologies need to be ported to different processor architectures. One example is the migration from x86 processors to Arm processors, which has taken time and is still not seamless.
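
The architecture issue is visible even from application code: an image built for x86 will not run natively on an Arm host, so tooling has to match the host architecture to an image variant. The sketch below normalizes the value of Python’s `platform.machine()` to the `amd64`/`arm64` names commonly used in image platform strings. The mapping table is deliberately partial and is an assumption made for illustration.

```python
import platform
from typing import Optional

# Partial, illustrative mapping from machine names to image architecture names.
ARCH_ALIASES = {
    "x86_64": "amd64",
    "amd64": "amd64",
    "aarch64": "arm64",
    "arm64": "arm64",
}


def image_platform(machine: Optional[str] = None) -> str:
    """Return a 'linux/<arch>' platform string for the given (or current) machine."""
    machine = (machine or platform.machine()).lower()
    try:
        return "linux/" + ARCH_ALIASES[machine]
    except KeyError:
        raise ValueError(f"no known image architecture for {machine!r}") from None


print(image_platform("x86_64"))   # linux/amd64
print(image_platform("aarch64"))  # linux/arm64
```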

A form of lock-in can also arise when an application written for a specific operating system needs to be containerized, while the containers used in the organization run on a different operating system. Typically this happens when a Windows application needs to be containerized in a Linux environment (or vice versa, although this is rarer).

It is possible to solve the problem by adding a compatibility layer, or by using an approach like the “Nested virtual machine” (a VM contained within another VM, which allows using a different operating system). However, these are solutions that add load to the physical server and increase complexity.

Finally, a relatively new technology also requires new working methodologies and new skills, which take time to spread among IT professionals. This delay makes it harder to find well-prepared system administrators.

Which applications are suitable for containerization?

Legacy applications

The first approach to containerization is to put legacy applications in containers with the goal of modernizing them. This is the first step in migrating systems that reside on on-premises servers to the cloud.

In this specific case, the advantage of containers lies not only in portability. It also lies in the transformation of monolithic applications into applications with a microservices-based architecture.

Modernizing legacy applications is therefore one of the reasons for adopting container logic. As a consequence, modernization will not only concern technologies (from monoliths on VMs to microservices on containers). With containerized applications, new working methodologies can be adopted, moving from the waterfall approach to the more modern Agile and DevOps approaches.

Applications running in hybrid cloud and multi-cloud environments

Companies that want to operate on a mix of proprietary data centers (on-premises) and one or more public clouds benefit from containerizing their applications. Thanks to container usage, their applications can be run consistently anywhere: from the developer’s laptop to on-premises servers to public cloud environments from different providers.

This consistency of execution enables continuous integration and continuous deployment (CI/CD) models, typical of DevOps environments.

Applications based on a microservices architecture

The creation of new applications based on a microservices architecture, where the application is composed of many small, highly decoupled and independently deployable services, finds its natural complement in the use of containers.

Containers and microservices work well together because containerizing microservices gives them portability, compatibility and scalability.

Containerization vs virtualization: what are the differences?

Like containerization, virtualization is one of the most widely used mechanisms for hosting applications in a computing system. While containerization uses the concept of a container, virtualization uses the notion of a virtual machine (VM) as its fundamental unit. Both technologies play a crucial role, but they are distinct concepts.

Virtualization lets us create software-based, or virtual, versions of a computing resource. These resources can include processing devices, storage, networks, servers or even applications.

We can see containerization as a lightweight alternative to virtualization. Container logic allows you to encapsulate an application in a container with its own operating environment. This way, instead of installing a full guest operating system in each virtual machine, containers share the kernel of the host’s operating system.
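
On Linux, this shared-kernel model rests on kernel features such as namespaces, which give each container its own view of process IDs, network interfaces, mount points and so on. As a small sketch, the function below lists the namespaces of the current process by reading `/proc/self/ns`. It assumes a Linux host and simply returns an empty result elsewhere.

```python
import os


def current_namespaces(ns_dir: str = "/proc/self/ns") -> dict:
    """Map namespace type (e.g. 'pid', 'net', 'mnt') to its kernel identifier.

    Assumes a Linux host; returns an empty dict if the directory is unavailable.
    """
    namespaces = {}
    try:
        for name in sorted(os.listdir(ns_dir)):
            # Each entry is a symlink whose target looks like 'pid:[4026531836]'.
            namespaces[name] = os.readlink(os.path.join(ns_dir, name))
    except OSError:
        pass  # not Linux, or /proc is not mounted
    return namespaces


for kind, ident in current_namespaces().items():
    print(f"{kind:20} {ident}")
```

Run on the host and again inside a container, the identifiers typically differ for the namespaces the container runtime has isolated, while the kernel underneath stays the same.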

We explored the concept of virtualization in depth in this article: What is container orchestration and how to do it with Kubernetes.

Why are containers the future?

There are no absolutely “future-proof” architectures and technologies, only relatively so. With that premise, can we say that containers are the future?

Yes, especially in contexts with a growing degree of complexity. Containerization technology represents a resource for facing the future challenges of the IT landscape.

To conclude, let’s review the reasons why containerizing applications is a forward-looking choice:

In many areas, containers have by now taken the place of hypervisor-based, traditional virtualization. Their portability, replicability, compatibility and scalability make them the ideal tool to fully leverage the benefits of cloud computing, public cloud, hybrid and multi-cloud environments.

Containers are also ideal for the rapid development of on-premises applications that can then be used on different hardware.

Containers are the technology used for developing microservices-based architectures, with CI/CD development models based on the DevOps culture and GitOps approach.

Furthermore, containers are the right technology to choose for large-scale projects with growing complexity. Technologies and methodologies have been designed to master such complexity. First and foremost is container orchestration, which allows managing large volumes of containers throughout their entire lifecycle.
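
The core idea behind orchestration can be pictured as a reconciliation step: compare the desired number of containers per service with what is actually running, then start or stop containers to close the gap. The sketch below is a toy model with hypothetical service names, not the algorithm of any particular orchestrator.

```python
def reconcile(desired: dict, running: dict) -> list:
    """Return (action, service, count) steps that bring 'running' in line with 'desired'."""
    actions = []
    for service in sorted(set(desired) | set(running)):
        diff = desired.get(service, 0) - running.get(service, 0)
        if diff > 0:
            actions.append(("start", service, diff))
        elif diff < 0:
            actions.append(("stop", service, -diff))
    return actions


# Hypothetical cluster state.
desired_state = {"web": 3, "worker": 2}
running_state = {"web": 1, "worker": 2, "old-batch": 1}

for action, service, count in reconcile(desired_state, running_state):
    print(action, service, count)
```

An orchestrator repeats a comparison of this kind continuously, which is how it keeps large numbers of containers in the intended state throughout their lifecycle.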

Finally, containers are a modern technology that has reached a good level of maturity and stability. They are used both for modernizing legacy applications and for creating new applications based on Cloud Native paradigms and architectures.