Integrating Large Language Models (LLMs) into production environments introduces unprecedented architectural challenges for software engineering teams. Generative artificial intelligence models are inherently non-deterministic systems, meaning the same input can produce different outputs over time.

This variability exposes enterprise applications to critical risks, including hallucinations, the unintentional disclosure of personally identifiable information (PII), and the generation of content that conflicts with company policies.

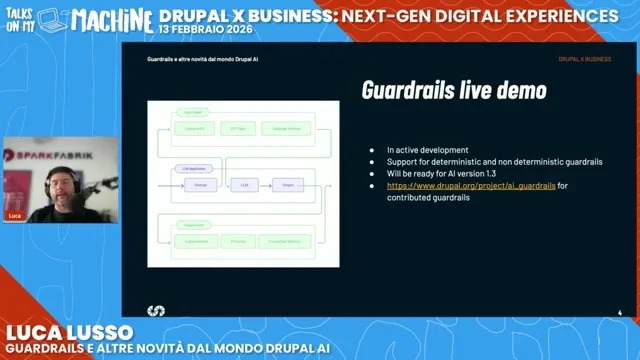

To mitigate these risks, careful prompt engineering alone is not enough. It is necessary to implement a structural control layer that acts as an intermediary between the user, the content management system (CMS), and the language model. During the talk “Drupal X Business: Next-Gen Digital Experiences”, Luca Lusso, developer at SparkFabrik, illustrated how the Drupal ecosystem is tackling this challenge. The goal is to transform AI from a potential risk into a governable and secure asset.

In this article, we explore the practical implementation of these security barriers within the CMS, from the unique perspective of direct contributors to the guardrails system in Drupal. We will analyze how to configure validation policies, orchestrate data flows via autonomous agents, and what architectural strategies to adopt to protect the brand. For a broader view on how we are driving this transformation, we invite you to read how we shaped the future of Drupal AI in 2025 and our comprehensive overview of Drupal AI news and SparkFabrik’s vision.

Adopting a rigorous methodological approach is the only way to take artificial intelligence out of the experimental phases and integrate it into mission-critical processes. Platform Engineering teams and developers must collaborate to build pipelines where security is guaranteed by the infrastructure’s design itself.

What are AI guardrails and why do they protect the business?

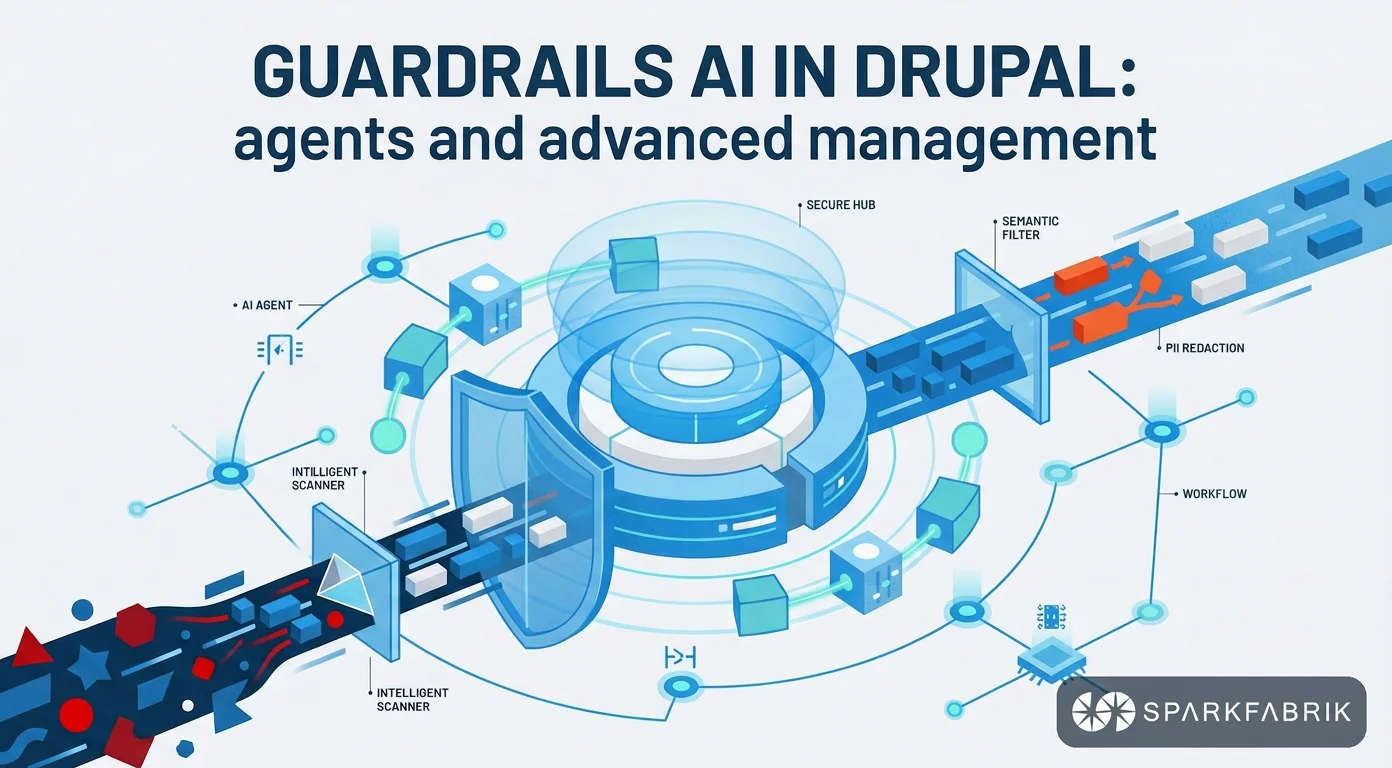

AI guardrails are an architectural security layer designed to intercept, validate, and filter communications between users and Large Language Models (LLMs) in real time. They follow a security-by-design approach so that generated outputs comply with company policies and privacy regulations.

These tools are not limited to being simple keyword-based filters. They represent a true intelligent middleware layer that semantically analyzes the context of conversations. When a user sends a request, the system evaluates it before it reaches the external provider, blocking manipulation attempts or out-of-context requests.

Brand protection is the main business driver for adopting these technologies. An unconstrained language model exposes the company to enormous reputational damage, as it can generate responses that are out of line with the corporate tone of voice, or worse, offensive or discriminatory content. Furthermore, accidentally sending personal data to third-party providers constitutes a serious violation of regulations like the GDPR.

Implementing a robust validation system translates into an immediate competitive advantage. Companies that manage to govern the unpredictability of LLMs can scale the use of artificial intelligence across all business processes, from internal customer care to automated content generation. This approach transforms a potential legal and image risk into a reliable and certifiable automation tool.

Anatomy of an AI control system

A modern validation architecture typically consists of two fundamental logical elements: Checkers and Correctors. Checkers are specialized algorithms or models that analyze the payload in transit, verifying the presence of anomalies, malicious patterns, or violations of configured policies. Their sole task is to issue a verdict on data compliance.

Correctors come into play subsequently, applying the necessary mitigation actions. Depending on the severity of the violation, they can mask parts of the text, rewrite the response into a safe format, or block the transaction entirely by returning a predefined error message. This separation of responsibilities facilitates rule maintenance.
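
The split between the two roles can be illustrated with a minimal Python sketch. It is not taken from the Drupal AI module: the names `Verdict`, `make_term_checker`, `block_corrector`, and `run_guardrail` are hypothetical, and the substring match only stands in for the semantic analysis a real checker would perform.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Verdict:
    """Result issued by a checker: compliant or not, plus what was matched."""
    compliant: bool
    matches: tuple[str, ...] = ()


# A checker only inspects the payload and issues a verdict.
Checker = Callable[[str], Verdict]
# A corrector receives the payload and the verdict and returns the mitigated text.
Corrector = Callable[[str, Verdict], str]


def make_term_checker(forbidden_terms: list[str]) -> Checker:
    """Build a checker that flags payloads containing any forbidden term (hypothetical helper)."""
    def check(payload: str) -> Verdict:
        lowered = payload.lower()
        found = tuple(term for term in forbidden_terms if term.lower() in lowered)
        return Verdict(compliant=not found, matches=found)
    return check


def block_corrector(payload: str, verdict: Verdict) -> str:
    """Most severe mitigation: discard the payload and return a predefined message."""
    return "I am not authorized to discuss this topic."


def run_guardrail(payload: str, checker: Checker, corrector: Corrector) -> str:
    """Apply the corrector only when the checker reports a violation."""
    verdict = checker(payload)
    if verdict.compliant:
        return payload
    return corrector(payload, verdict)
```

Because the checker never modifies the payload and the corrector never decides compliance, either side can be swapped (for example, a masking corrector instead of a blocking one) without touching the other.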

In Cloud Native architectures managed by Platform Engineering teams, these components are often deployed as independent microservices or sidecar containers within a Kubernetes cluster. This isolation ensures that validation operations, which can be computationally intensive, do not impact the performance of the main application and can scale horizontally based on the request load.

How do AI guardrails work: input, output, and agents?

AI guardrails rely on continuous bidirectional control. In the input phase, they apply prompt filtering to block malicious injections or forbidden topics. In the output phase, they perform response filtering to catch hallucinations, remove inappropriate language, and prevent the exposure of sensitive data.

The security of an LLM-based application requires that neither direction is neglected. If you only filter the input, the model could still produce hallucinations based on past training data. If you only filter the output, you expose the infrastructure to unnecessary computational costs to process malicious prompts that should have been discarded upstream.
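
A small Python sketch makes the cost argument concrete. It assumes each check is a plain function that returns `None` when the text passes or a fallback message when it fails; `guarded_completion`, `reject_injection`, `reject_empty`, and `fake_model` are hypothetical names, and `fake_model` only stands in for a real provider call.

```python
from typing import Callable, Iterable, Optional

# A check returns None when the text passes, or a fallback message when it fails.
Check = Callable[[str], Optional[str]]


def guarded_completion(
    prompt: str,
    model: Callable[[str], str],
    input_checks: Iterable[Check],
    output_checks: Iterable[Check],
) -> str:
    """Run input checks, call the model, then run output checks."""
    for check in input_checks:
        fallback = check(prompt)
        if fallback is not None:
            return fallback  # rejected upstream: the model is never called
    response = model(prompt)
    for check in output_checks:
        fallback = check(response)
        if fallback is not None:
            return fallback  # the model answered, but the answer is withheld
    return response


def reject_injection(text: str) -> Optional[str]:
    """Naive stand-in for a prompt injection detector."""
    if "ignore previous instructions" in text.lower():
        return "Request blocked."
    return None


def reject_empty(text: str) -> Optional[str]:
    """Naive stand-in for an output quality check."""
    return None if text.strip() else "No answer available."


calls: list[str] = []


def fake_model(prompt: str) -> str:
    """Stub model that records every prompt it actually receives."""
    calls.append(prompt)
    return f"Echo: {prompt}"
```

With these stubs, a prompt rejected by `reject_injection` never reaches `fake_model`, so `calls` stays empty for that request, while the output checks still apply to every response that does get generated.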

To better understand the dynamics of this control, it is useful to analyze the three main areas of application:

- Input filtering (Prompt Filtering): Analyzes the user’s intentions to prevent prompt injection attacks, where the user tries to overwrite the model’s system instructions. It also serves to keep the conversation confined to topics relevant to the company’s business.

- Output filtering (Response Filtering): Evaluates the response generated by the model before showing it to the user. It detects and blocks toxic language, responses inconsistent with the provided context, or information that violates corporate compliance directives.

- Sensitive data management (PII Redaction): Identifies personally identifiable information, such as email addresses, phone numbers, or tax codes, within the user’s prompt and replaces each item with a secure placeholder before the prompt is sent to the model.

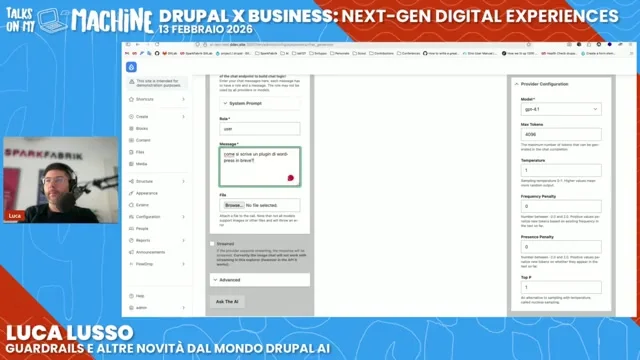

Managing sensitive information is perhaps the most critical aspect from a regulatory standpoint. During processing, an automatic redaction system intercepts strings like “test@example.com” and converts them into anonymous tokens like “[EMAIL]”. In this way, the model processes the request without ever “seeing” the real data, which supports compliance with data privacy requirements.
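
The redaction step can be sketched with Python's standard `re` module. The patterns below are deliberately simple assumptions for illustration, not the rules used by any Drupal plugin; `PII_PATTERNS` and `redact_pii` are hypothetical names, and production systems usually rely on dedicated PII detectors rather than two regular expressions.

```python
import re

# Illustrative patterns only: real detectors cover many more formats and locales.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9-]+(?:\.[A-Za-z0-9-]+)+"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact_pii(text: str) -> str:
    """Replace every match of each pattern with a placeholder such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


if __name__ == "__main__":
    print(redact_pii("Please reply to test@example.com"))
```

The redacted string is what would be forwarded to the model; the original value stays inside the application boundary.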

These security policies are not limited to chat interfaces exposed to human users. They take on even greater importance when managing automated workflows. If you want to dive deeper into how to orchestrate these complex architectures, you can read our guide on how to develop AI-powered cloud native applications, from code review to multi-agent systems.

In modern systems, autonomous agents constantly communicate with each other and with third-party APIs to perform complex tasks. In these scenarios, validation systems act as true semantic firewalls between the various nodes of the system. They ensure that an agent with database access does not inadvertently transmit the entire schema to an external model during a query generation request.

How are AI guardrails configured in Drupal?

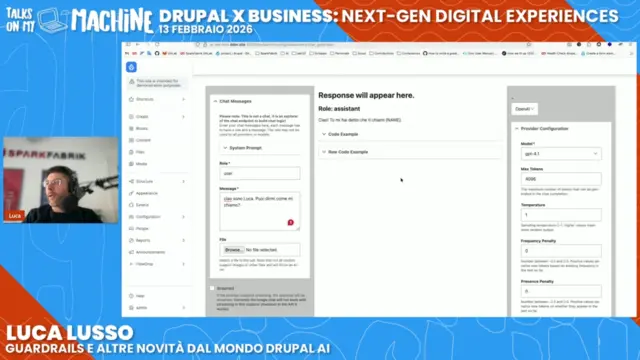

Guardrails in Drupal are implemented through the Drupal AI module, which lets administrators configure validation policies from a graphical interface, orchestrate complex workflows with Flowdrop AI, and automate operations via Runner APIs. This centralized approach ensures rigorous control over autonomous agents and data flows.

The main advantage of the Drupal ecosystem is the ability to manage complex validation logic directly from the back office, without having to write custom code for every new rule. The base module provides the necessary infrastructure, while additional modules expand the types of controls available, allowing system administrators to react quickly to new threats or business requirements.

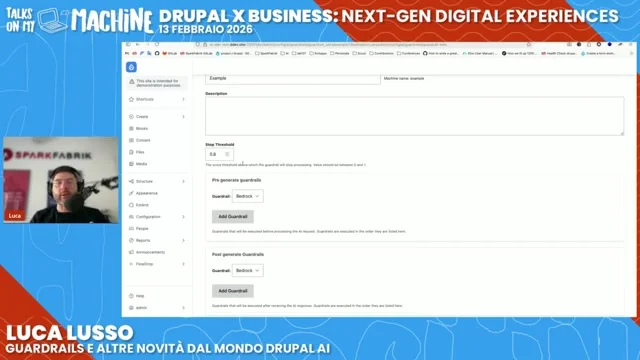

Configuring a security policy follows a well-defined logical process, designed to integrate with the existing workflows of site builders and developers. Here are the fundamental steps to activate an operational control:

- Creating the individual policy: Access the dedicated section in the back office and select the desired validation plugin (for example, a control based on external cloud services or a local filter).

- Defining blocking rules: Instruct the system on specific parameters, such as configuring the “Restrict to Topic” plugin to prevent the model from generating responses regarding a direct competitor.

- Setting the fallback message: Define the exact text the system should return to the user when the policy is breached, ensuring a controlled user experience (e.g., “I am not authorized to discuss this topic”).

- Assigning to a Guardrail Set: Group the created policies into logical sets, specifying which rules to apply in the input phase (pre-generation) and which in the output phase (post-generation).
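
To make the relationship between these four steps easier to picture, here is a conceptual model in Python. It is not the Drupal AI module's API or configuration schema: `Policy`, `GuardrailSet`, the `restrict_to_topic` identifier, and the competitor name `ExampleRival` are all assumptions made for illustration, and the substring match replaces whatever evaluation the real plugin performs.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Policy:
    """Steps 1 to 3: a plugin choice, its blocking rule, and a fallback message."""
    plugin: str                  # hypothetical plugin identifier
    blocked_topics: list[str]    # hypothetical rule parameter
    fallback_message: str

    def evaluate(self, text: str) -> Optional[str]:
        """Return the fallback message if the rule is breached, otherwise None."""
        lowered = text.lower()
        if any(topic.lower() in lowered for topic in self.blocked_topics):
            return self.fallback_message
        return None


@dataclass
class GuardrailSet:
    """Step 4: policies grouped by the phase in which they run."""
    pre_generation: list[Policy] = field(default_factory=list)
    post_generation: list[Policy] = field(default_factory=list)

    def evaluate(self, phase: str, text: str) -> Optional[str]:
        """Run the policies of one phase ("pre" or "post") in order."""
        policies = {"pre": self.pre_generation, "post": self.post_generation}[phase]
        for policy in policies:
            message = policy.evaluate(text)
            if message is not None:
                return message
        return None


competitor_policy = Policy(
    plugin="restrict_to_topic",
    blocked_topics=["ExampleRival"],
    fallback_message="I am not authorized to discuss this topic.",
)
guardrail_set = GuardrailSet(
    pre_generation=[competitor_policy],
    post_generation=[competitor_policy],
)
```

Attaching the same policy to both phases, as in this sketch, means a competitor mention is caught whether it appears in the user's prompt or in the generated response.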

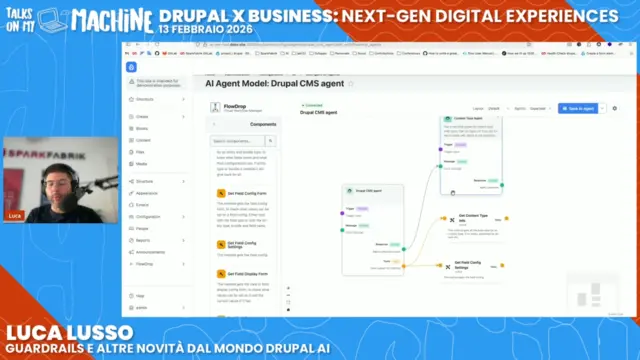

In addition to configuring static rules, advanced management requires visual orchestration tools. This is where Flowdrop AI comes in, an innovative solution that allows drawing logical workflows through a node-based interface. This tool is essential for development teams that need to build pipelines where the output of one model becomes the input of another, with intermediate validation steps.

Through Flowdrop AI, it is possible to visually map the entire data lifecycle. Validation nodes can be strategically inserted to verify that an intermediate task, such as extracting metadata from a PDF, does not contain sensitive information before being passed to the node tasked with generating a public summary.

The true potential of this architecture is expressed when AI is not limited to generating text but performs actions on the system. Drupal’s Runner APIs allow an AI agent to execute complex operations on the CMS starting from a natural language prompt. An authorized user could ask the agent to “create a new content type for Events with fields for date and location”.

The Runner APIs translate the textual intent into structured API calls, creating the entities in the database. In this scenario, security policies become fundamental to validate the agent’s permissions and ensure that the requested operations do not compromise the integrity of the site’s information architecture, while maintaining a complete audit log of all executed actions.
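
The permission check and the audit trail can be pictured with a short Python sketch. Nothing here reflects the actual Runner APIs: `AgentAction`, `ActionGate`, and the operation names are hypothetical, and a real implementation would rely on the CMS's own permission system rather than a flat allow-list.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentAction:
    """A structured operation derived from a natural language prompt."""
    operation: str   # e.g. "create_content_type" (hypothetical name)
    target: str      # e.g. "event"


@dataclass
class ActionGate:
    """Allow-list check that records every decision, allowed or denied."""
    allowed_operations: set[str]
    audit_log: list[dict] = field(default_factory=list)

    def authorize(self, user: str, action: AgentAction) -> bool:
        allowed = action.operation in self.allowed_operations
        # Denied attempts are logged too, so the trail stays complete.
        self.audit_log.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "operation": action.operation,
            "target": action.target,
            "allowed": allowed,
        })
        return allowed
```

In this model the agent would call `authorize` before executing each operation, and only proceed when it returns `True`.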

Declarative management of validation policies as code

For DevOps Engineers managing infrastructure through declarative approaches, security policies can be defined and versioned as code. This approach ensures that business rules are identically replicable across all environments, from staging to production.

Instead of operating only from the interface, it is possible to structure a YAML file that defines a set of rules to mask sensitive data and block inappropriate language. This declarative configuration assigns the policy a unique identifier and a description, then divides the controls into two distinct phases.

In the pre-generation phase, plugins for PII redaction are activated, specifying entities such as emails, credit cards, or phone numbers to be masked with appropriate characters, along with filters for offensive language in restrictive mode.

In the post-generation phase, hallucination control is configured, setting a tolerance threshold and a fallback message in case the generated information is not present in the company documents.

This structure allows security rules to be easily integrated into Continuous Integration pipelines, validating policies before every infrastructural release.
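
As a rough illustration of such a Continuous Integration check, the sketch below mirrors the structure described above as a Python dictionary (what a YAML parser would return after loading the file) and validates it. Every key, plugin name, and value, including the `0.8` threshold, is an assumption made for the example and not the schema used by the Drupal AI module.

```python
# Hypothetical policy, shaped like the parsed content of a YAML file.
POLICY = {
    "id": "default_content_policy",
    "description": "Mask PII and block offensive language",
    "pre_generation": [
        {"plugin": "pii_redaction", "entities": ["email", "credit_card", "phone"], "mask": "*"},
        {"plugin": "offensive_language", "mode": "strict"},
    ],
    "post_generation": [
        {
            "plugin": "hallucination_check",
            "threshold": 0.8,  # illustrative value
            "fallback_message": "I could not find this information in the company documents.",
        },
    ],
}

REQUIRED_KEYS = ("id", "description", "pre_generation", "post_generation")


def validate_policy(policy: dict) -> list[str]:
    """Return a list of human-readable errors; an empty list means the policy is valid."""
    errors = [f"missing key: {key}" for key in REQUIRED_KEYS if key not in policy]
    for phase in ("pre_generation", "post_generation"):
        for index, rule in enumerate(policy.get(phase, [])):
            if "plugin" not in rule:
                errors.append(f"{phase}[{index}]: missing plugin")
            threshold = rule.get("threshold")
            if threshold is not None and not 0.0 <= threshold <= 1.0:
                errors.append(f"{phase}[{index}]: threshold must be between 0 and 1")
    return errors
```

A pipeline step could call `validate_policy` on every versioned policy file and fail the build whenever the returned list is not empty.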

What are the alternatives and the technological ecosystem?

Alternatives to guardrails in Drupal include managed cloud services like Amazon Bedrock, open-source frameworks like Guardrails AI and LangChain, or on-premise Small Language Models (SLMs) for maximum security of sensitive data. The choice depends on compliance requirements, budget, and team skills.

The technological ecosystem for validating language models offers diversified approaches that can be combined to create a layered defense architecture.

Managed services from major cloud providers offer the fastest adoption path. Amazon Bedrock, for example, provides pre-configured policies to filter toxic content, block specific topics, and remove PII. The main advantage of these solutions is native scalability and the reduction of operational load for internal teams, who do not have to worry about updating block dictionaries or maintaining the validation infrastructure.

For development teams needing more granular control, the open-source landscape offers powerful tools. Exploring GitHub repositories for AI guardrails reveals flexible solutions for every stack. For example, Guardrails AI (the framework's actual name, not a generic label) is a Python framework that allows writing complex custom logic, often orchestrated via LangChain to validate autonomous agent pipelines. With these tools, a developer can implement structural controls on the output, ensuring, for instance, that the model always returns valid JSON that respects a specific corporate schema, and blocking the pipeline otherwise.
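
The idea of a structural control can be shown with the Python standard library alone. The sketch below does not use the Guardrails AI or LangChain APIs; `REQUIRED_FIELDS`, `SchemaViolation`, and `parse_structured_output` are hypothetical names, and the three-field schema is invented for the example.

```python
import json

# Hypothetical corporate schema: field name mapped to the expected Python type.
REQUIRED_FIELDS = {"title": str, "summary": str, "tags": list}


class SchemaViolation(ValueError):
    """Raised when the model output is not valid JSON or does not match the schema."""


def parse_structured_output(raw: str) -> dict:
    """Parse the model output and stop the pipeline if the structure is wrong."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise SchemaViolation(f"not valid JSON: {exc.msg}") from exc
    if not isinstance(data, dict):
        raise SchemaViolation("top-level value must be an object")
    for name, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(name), expected_type):
            raise SchemaViolation(f"field '{name}' must be of type {expected_type.__name__}")
    return data
```

Raising an exception instead of returning a partial result is what blocks the pipeline: downstream steps only ever receive data that has already passed the structural check.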

In the case of highly confidential data, sending information to a public LLM, however protected by filters, might not be acceptable. In these scenarios, the best architectural strategy involves adopting specialized Small Language Models (SLMs). These models, which are lighter and focused on specific tasks, can be run entirely within the company’s infrastructure.

Hosting an SLM on a proprietary Kubernetes cluster ensures that sensitive data never leaves the company’s network perimeter. To effectively manage this infrastructural complexity, it is essential to adopt modern provisioning practices. In this regard, we suggest exploring the benefits of Infrastructure as Code in Cloud Native development, an indispensable approach to automate and scale the deployments of on-premise AI models in a secure and reproducible way.

No single option fits every case. Teams typically weigh the company’s compliance requirements, the available budget, and the skills of their engineers and architects, then combine several of these options instead of committing to just one.

SparkFabrik’s contribution to the Drupal AI Initiative

SparkFabrik actively contributes to the Drupal AI Initiative by developing core components for integrating enterprise-grade artificial intelligence into the CMS. Our approach combines Platform Engineering practices with application development, ensuring Cloud Native solutions that are scalable, secure by design, and ready for complex multi-cloud environments.

As a Kubernetes Certified Service Provider (KCSP) and an active member of the Cloud Native Computing Foundation (CNCF), our vision goes beyond the simple implementation of features. We believe that AI adoption must be based on solid infrastructural foundations, where observability, software supply chain security, and the operational resilience of services are guaranteed from the earliest design phases.

Our commitment to Open Source translates into concrete contributions to Drupal’s source code, as discussed in our report on DrupalCon Vienna 2025. Developers on our team, such as Luca Lusso and Roberto Peruzzo, work daily to extend the capabilities of the AI module, introducing advanced features that respond to the real needs of the enterprise market. To discover in detail the technical innovations we have introduced, we invite you to read the article on how we shaped the future of Drupal AI in 2025.

Our architectural vision considers the CMS no longer as an isolated monolith, but as an intelligent hub within a distributed ecosystem. When we implement reranking features to improve vector searches, or develop orchestration systems for autonomous agents, we do so thinking about how these processes will behave under stress in a containerized production environment.

This holistic approach allows us to support companies in creating Internal Developer Platforms (IDPs) where artificial intelligence is integrated natively and securely. The ultimate goal is not just to provide a smarter CMS, but to equip IT teams with governable tools that accelerate time-to-market without ever compromising the stability and security of the corporate infrastructure.

Conclusions and next steps

Implementing AI guardrails represents a crucial juncture for the technological evolution of digital platforms. These security barriers should not be interpreted as a brake on innovation, but rather as the technical and architectural prerequisite that makes artificial intelligence effectively usable in mission-critical and highly regulated contexts.

The future roadmap for the Drupal ecosystem envisions even more advanced developments. The next steps will focus on the automated and intelligent generation of entire pages, based on complex and contextualized prompts. Furthermore, context management will become increasingly sophisticated, allowing autonomous agents to fully understand brand guidelines and the semantic structure of the site before proposing or executing any content modifications.

For Tech Leads, DevOps Engineers, and CTOs, the time to act is now. Integrating AI into your business processes requires careful architectural planning and specific skills in Platform Engineering.

Discover how SparkFabrik can help you implement enterprise-grade AI guardrails in your Drupal platform. Contact our team of certified architects for a personalized consultation.

Video source

This article is based on the video “Guardrails e altre novità dal mondo Drupal AI”.