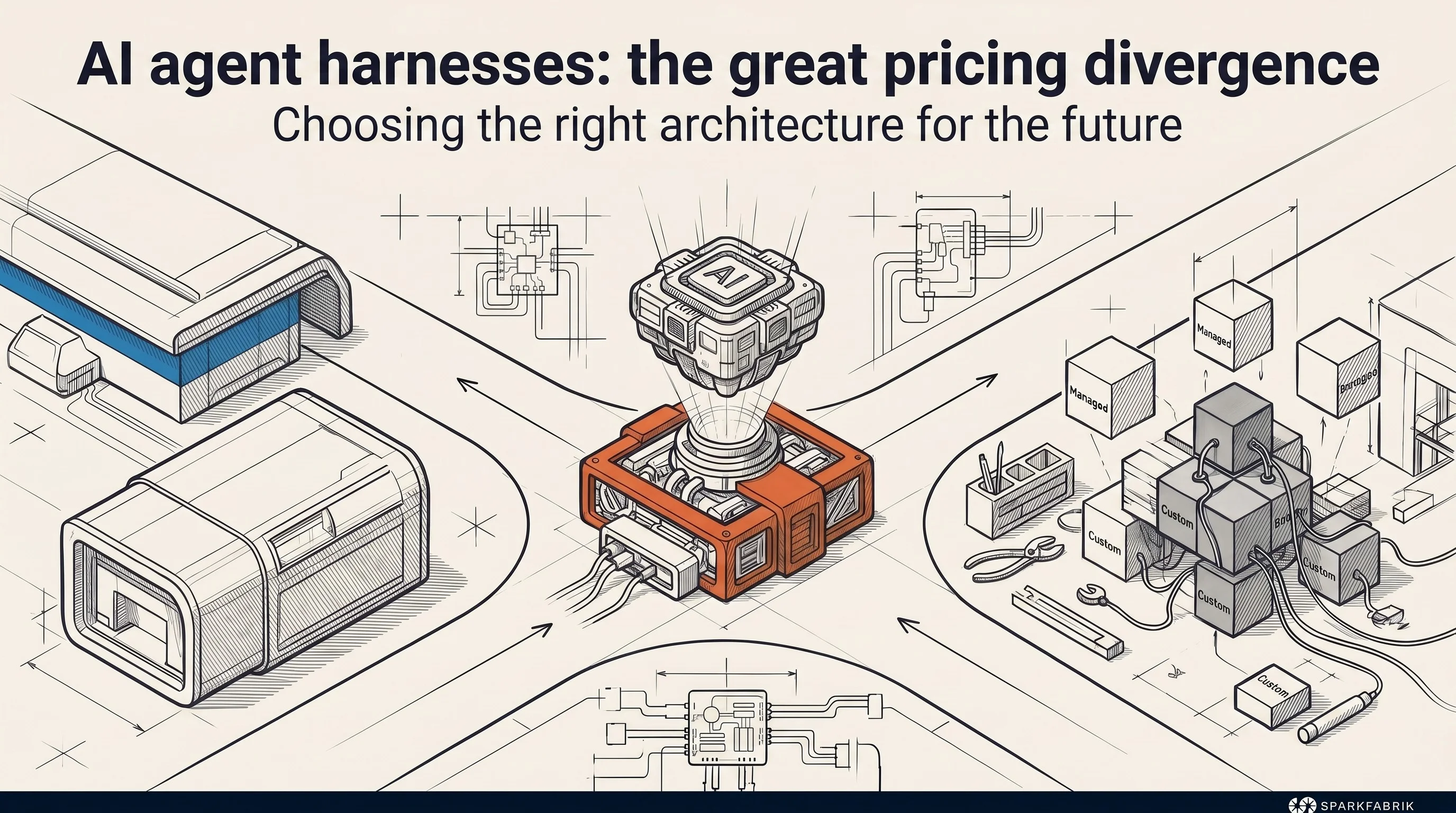

Imagine you have just purchased a powerful engine, the pinnacle of modern engineering. It is delivered to you on a wooden pallet, raw and bare. To use it on the road, you have to build the entire car from scratch. You will need to assemble the steering, brakes, fuel tank, and onboard electronics. Until recently, integrating an artificial intelligence model into an enterprise application worked exactly like this.

The model was the engine, but you had to build all the surrounding infrastructure yourself to make it run.

In our direct experience with cloud-native architectures, we have often seen emerging technologies redefine entire markets. Today, frontier model vendors are moving the battlefield to orchestration infrastructure. The value has shifted from the intelligence of the model to the robustness of the harness that governs it.

A clear analysis by Janakiram MSV published on The New Stack highlights a crucial fact. The giants of artificial intelligence are offering this chassis with diametrically opposed business models. Anthropic rents it to you by the hour, as a turnkey service. OpenAI gives you the blueprints to build it, hoping you will use its engine exclusively.

This divergence is not a simple cloud pricing war. It is the exact moment that defines the new point of architectural lock-in for custom artificial intelligence software development. The choice between a managed service and an open-source SDK will not just impact the invoice. It will determine the portability, system flexibility, and technical debt that companies will have to manage for the next decade.

Let’s start by defining the harness itself, to understand how this infrastructure decision will change the way we develop software.

What is the harness and why has it become the real product

Defining a new standard

To understand the scale of this market clash, we must first demystify the object of the dispute. In short, a large language model (LLM), on its own, is limited to predicting the next token based on statistical calculation. It has no memory of past conversations, cannot query a corporate database, and cannot perform an action on external software.

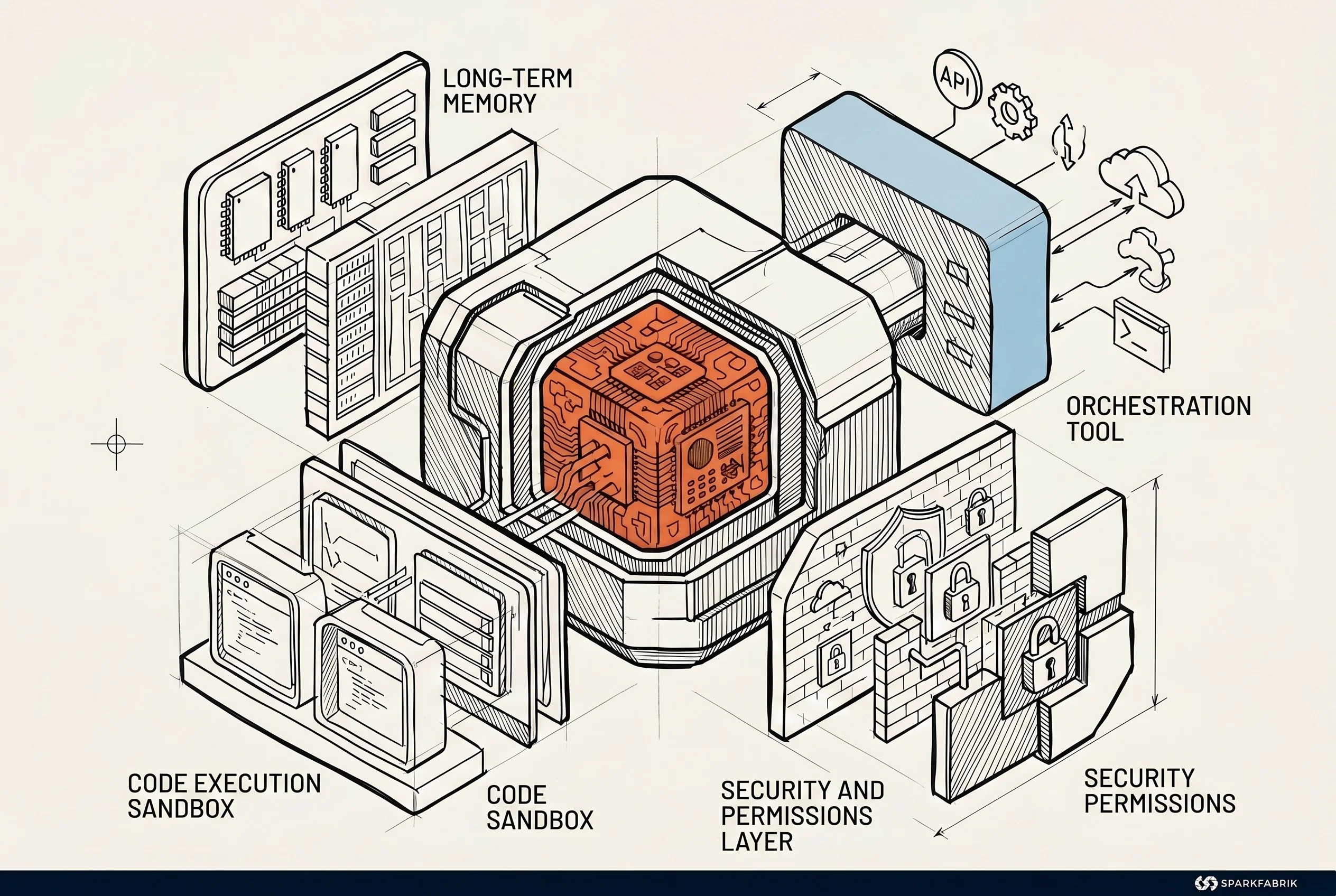

The harness is the middleware that bridges this gap. It is the code that takes the user’s request, retrieves historical context, queries the necessary APIs, packages everything for the model, and then translates the textual response into a concrete action on the system.
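The steps described above can be sketched as a small loop. This is a minimal illustration, not any vendor's implementation: `fake_model`, the `Harness` class, the `CALL <tool> <argument>` reply convention, and the `order_status` tool are all hypothetical names invented for the example, and the model is a stub rather than a real LLM call.

```python
from dataclasses import dataclass, field
from typing import Callable


# Hypothetical stand-in for a real LLM call. It replies either with a tool
# request ("CALL <tool> <argument>") or with a final answer in plain text.
def fake_model(prompt: str) -> str:
    if "TOOL_RESULT" in prompt:
        return "Order 42 has shipped."
    return "CALL order_status 42"


@dataclass
class Harness:
    model: Callable[[str], str]
    tools: dict[str, Callable[[str], str]]
    history: list[str] = field(default_factory=list)

    def run(self, user_request: str, max_steps: int = 5) -> str:
        # Take the user's request and add it to the stored context.
        self.history.append(f"USER: {user_request}")
        for _ in range(max_steps):
            # Package the accumulated context into a single prompt.
            prompt = "\n".join(self.history)
            reply = self.model(prompt)
            if reply.startswith("CALL "):
                # Translate the textual reply into a concrete action.
                _, tool_name, argument = reply.split(" ", 2)
                result = self.tools[tool_name](argument)
                self.history.append(f"TOOL_RESULT {tool_name}: {result}")
                continue
            self.history.append(f"ASSISTANT: {reply}")
            return reply
        raise RuntimeError("step budget exhausted without a final answer")


harness = Harness(
    model=fake_model,
    tools={"order_status": lambda order_id: f"order {order_id}: shipped"},
)
answer = harness.run("Where is order 42?")
print(answer)
```

Even in this toy form, the model only ever sees and produces text. Everything that touches memory or an external system lives in the surrounding loop.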

The term began to circulate heavily in February. At that time, OpenAI published a technical post on its blog describing how a small internal team had managed to release a complex 1-million-line-of-code system into production. The unique part? Literally zero lines were written by human hands.

Martin Fowler definitively canonized the concept in a long essay, defining the exact boundaries of this architecture:


“The harness is everything surrounding an AI model, except for the model itself. It is the vital management infrastructure that turns an unpredictable text generator into a software operator capable of performing tasks in production.”

The harness manages model invocation and long-term memory. It handles the orchestration of external tools and code execution in isolated environments (sandboxes). It also regulates security permissions and error recovery. Without a robust harness, an AI agent is useless.
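Two of these responsibilities, security permissions and error recovery, can be illustrated with a short sketch. It assumes a simple allowlist and a retry on transient network failures. The function `call_tool`, the `PermissionDenied` exception, and the two example tools are hypothetical and chosen only for illustration.

```python
import time
from typing import Callable, Optional


class PermissionDenied(Exception):
    """Raised when an agent requests a tool outside its allowlist."""


def call_tool(
    name: str,
    argument: str,
    tools: dict[str, Callable[[str], str]],
    allowed: set[str],
    max_attempts: int = 3,
    backoff_seconds: float = 0.0,
) -> str:
    # Permission gate: refuse before anything is executed.
    if name not in allowed:
        raise PermissionDenied(f"tool {name!r} is not allowed for this agent")
    last_error: Optional[Exception] = None
    for attempt in range(1, max_attempts + 1):
        try:
            return tools[name](argument)
        except ConnectionError as error:
            # Error recovery: retry transient failures with linear backoff.
            last_error = error
            time.sleep(backoff_seconds * attempt)
    raise RuntimeError(f"{name} failed after {max_attempts} attempts") from last_error


# Hypothetical tool that fails twice before succeeding.
attempts = {"count": 0}


def flaky_lookup(argument: str) -> str:
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise ConnectionError("temporary network failure")
    return f"result for {argument}"


tools = {"lookup": flaky_lookup, "delete_records": lambda argument: "deleted"}
result = call_tool("lookup", "invoice-7", tools, allowed={"lookup"})
print(result)
```

A production harness would add sandboxing, audit logging, and finer-grained policies, but the ordering shown here (check permission first, then execute, then recover) is the core idea.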

The hidden cost of DIY solutions

For 18 months, starting from the explosion of generative AI, the entire market lived in a technological limbo. Cloud vendors and framework creators offered only partial, fragmented, and often incompatible components. If a company wanted to bring an AI agent into production, it was forced to do the dirty work. Internal teams had to assemble custom solutions by gluing together open-source parts.

Startups raised capital to sell pre-packaged versions of this infrastructure. The harness became a market precisely because the available pieces did not provide a clean, definitive answer to enterprise needs.

In our work accompanying companies, we have observed the direct consequences of this fragmentation. Building a proprietary governance layer creates bottlenecks. Teams must personally manage the differences between orchestration and choreography in complex systems. They spend months just ensuring that every component communicates securely and deterministically, diverting resources from actual product development.

Now that the harness has a name and a defined perimeter, model creators have decided to reclaim this space. And they are doing so by imposing radically different worldviews.

The market crossroads: the “all-inclusive” approach vs. open source

The managed service path

The fragmentation of the last eighteen months is about to end, replaced by sharp polarization. On one side, we have the fully managed service approach. On the other, we find the path of open infrastructure based on the bring-your-own-compute principle.

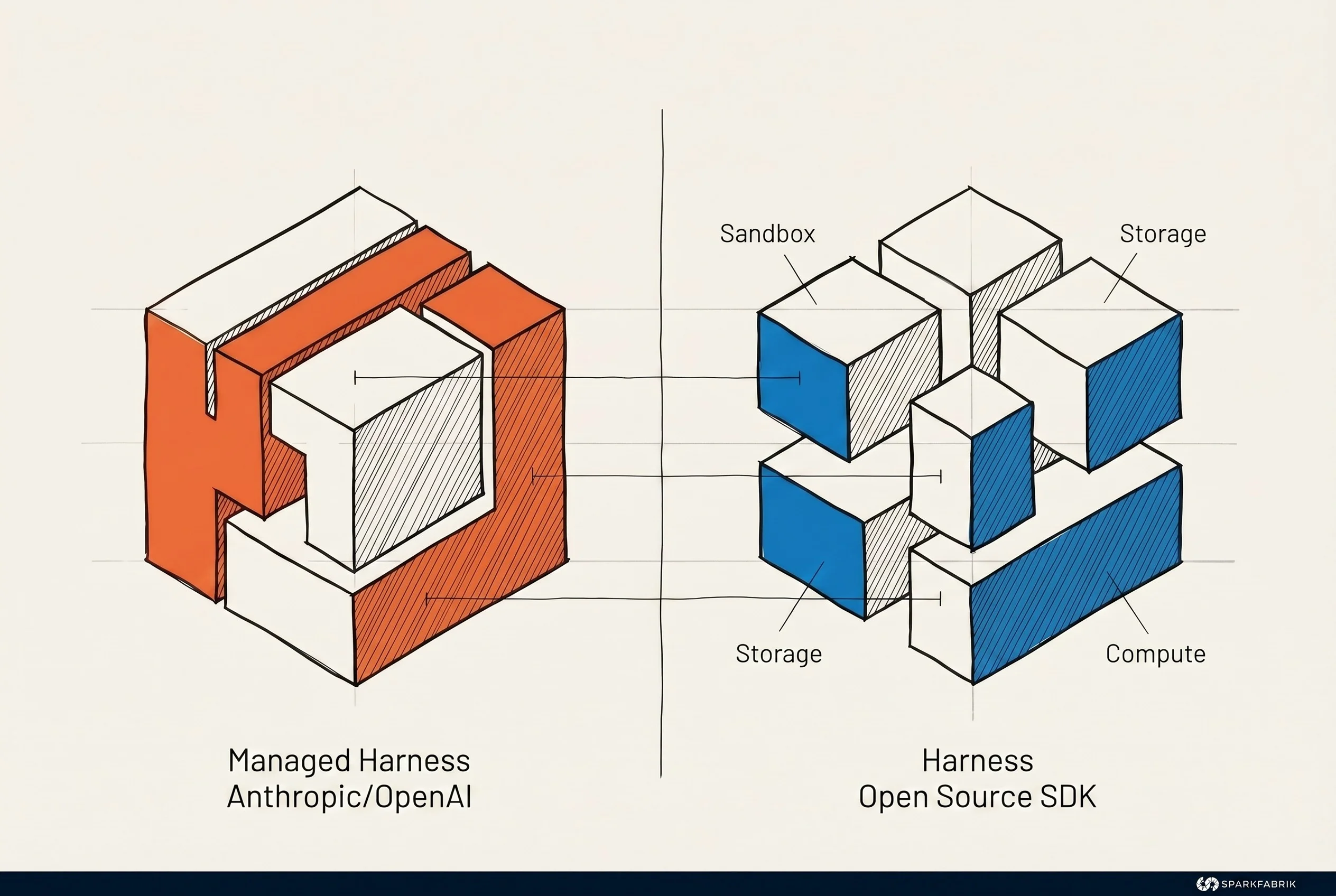

Anthropic blazed the first trail by launching its Managed Agents service. The promise is a maximum reduction in cognitive load for development teams. You define the agent, the tools it can use, and the security limits. Anthropic takes care of running the execution environment: it manages long sessions, sandboxed code execution, permissions, and end-to-end tracking.

The billing model is direct: $0.08 per session hour, in addition to token consumption. This is not a sandbox or simple prototype rate. Early enterprise use cases demonstrate that Anthropic’s approach is ready for heavy production:


Notion uses these managed agents to perform dozens of delegation tasks in parallel.

Rakuten has deployed agents specialized in sales, marketing, finance, and human resources.

Sentry has built an agent that takes a reported bug and turns it into an open pull request, without any intermediate human intervention.

Asana has integrated it into its AI Teammates feature.
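To make the $0.08 per session hour rate concrete, here is a small worked calculation. The rate comes from the text above. The workload (20 agents running 6 hours a day for 30 days) and the token spend are hypothetical figures chosen only to show the arithmetic.

```python
SESSION_HOUR_RATE_USD = 0.08  # rate quoted in the article


def managed_session_cost(session_hours: float, token_cost_usd: float) -> float:
    """Runtime fee plus token consumption for one billing period."""
    return session_hours * SESSION_HOUR_RATE_USD + token_cost_usd


# Hypothetical workload: 20 agents, each running 6 hours a day for 30 days.
session_hours = 20 * 6 * 30
runtime_fee = session_hours * SESSION_HOUR_RATE_USD

# Hypothetical token spend for the same period.
total = managed_session_cost(session_hours, token_cost_usd=1_500.0)

print(f"session hours: {session_hours}")
print(f"runtime fee:   ${runtime_fee:,.2f}")
print(f"total:         ${total:,.2f}")
```

Under these assumptions the runtime fee is a small fraction of the bill. Token consumption, not the hourly rate, dominates the total.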

We are witnessing the definitive shift from “do-it-yourself” orchestration to the Managed Agents model. The real news is the operational convenience: Anthropic takes charge of the execution infrastructure (the managed harness), leaving companies only with the burden of business logic.

The open alternative and ownership costs

Seven days after Anthropic’s launch, OpenAI responded with a mirror-image move. It released an update to its Agents SDK, strictly open source. This tool includes a native harness for OpenAI models, offering configurable memory, orchestration, and filesystem tools.

The crucial difference is in the delivery and pricing model.

OpenAI does not run the computation for you and does not apply any proprietary runtime costs or hourly fees. It provides you with the orchestration code, but you have to provide the physical infrastructure. The SDK natively supports 7 sandbox providers (Blaxel, Cloudflare, Daytona, E2B, Modal, Runloop, and Vercel) and allows saving operation states on storage systems like S3, GCS, Azure Blob, and Cloudflare R2.
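The idea of saving agent state to interchangeable storage backends can be sketched with a small interface. This is not the Agents SDK's API: `StateStore`, `LocalFileStore`, and `resume_or_start` are hypothetical names, and a local directory stands in for an object store such as S3 or R2.

```python
import json
import tempfile
from pathlib import Path
from typing import Protocol


class StateStore(Protocol):
    """Hypothetical interface; a cloud backend would implement the same two methods."""

    def save(self, session_id: str, state: dict) -> None: ...

    def load(self, session_id: str) -> dict: ...


class LocalFileStore:
    """Stand-in backend that writes JSON files to a local directory."""

    def __init__(self, root: Path) -> None:
        self.root = root
        self.root.mkdir(parents=True, exist_ok=True)

    def save(self, session_id: str, state: dict) -> None:
        (self.root / f"{session_id}.json").write_text(json.dumps(state))

    def load(self, session_id: str) -> dict:
        return json.loads((self.root / f"{session_id}.json").read_text())


def resume_or_start(store: StateStore, session_id: str) -> dict:
    # Resume from a checkpoint if one exists, otherwise start fresh.
    # FileNotFoundError is specific to this local stand-in backend.
    try:
        return store.load(session_id)
    except FileNotFoundError:
        return {"step": 0, "notes": []}


store = LocalFileStore(Path(tempfile.mkdtemp()))
state = resume_or_start(store, "session-1")
state["step"] += 1
state["notes"].append("fetched invoice")
store.save("session-1", state)

restored = resume_or_start(store, "session-1")
print(restored)
```

Because the agent code depends only on the two-method interface, swapping the storage provider does not require touching the orchestration logic.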

The total cost of ownership changes radically with this approach. If you choose an open-source agents SDK, you don’t pay framework licensing fees. However, you will incur processing costs for sandbox providers and cloud storage expenses to maintain agent memory. Furthermore, you will have to calculate the cost of the engineering time required to configure and maintain this distributed infrastructure.
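The three cost components listed above can be put into a simple model. Every figure below is hypothetical and exists only to show how the total is assembled. Token costs are left out because they are paid under either delivery model.

```python
from dataclasses import dataclass


@dataclass
class SelfHostedCosts:
    sandbox_compute_usd: float   # monthly bill from the sandbox provider
    storage_usd: float           # monthly cloud storage for agent memory
    engineering_hours: float     # monthly hours spent configuring and maintaining
    engineering_rate_usd: float  # loaded hourly cost of an engineer

    def engineering_usd(self) -> float:
        return self.engineering_hours * self.engineering_rate_usd

    def monthly_total(self) -> float:
        return self.sandbox_compute_usd + self.storage_usd + self.engineering_usd()


# All figures are hypothetical.
costs = SelfHostedCosts(
    sandbox_compute_usd=400.0,
    storage_usd=50.0,
    engineering_hours=40.0,
    engineering_rate_usd=90.0,
)
total = costs.monthly_total()
engineering_share = costs.engineering_usd() / total

print(f"monthly total:     ${total:,.2f}")
print(f"engineering share: {engineering_share:.0%}")
```

With these example numbers, engineering time accounts for most of the monthly cost, which is why a framework with no license fee is not the same as free infrastructure.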

This abundance of infrastructure options closely resembles the challenges of multi-cloud orchestration for organizations. The total cost for the company is not zero. But OpenAI deliberately chooses not to monetize the execution layer to drive pure consumption of its models.

The third way: Google and Microsoft’s granularity

Google and Microsoft are not standing by, but are proposing a third way based on service granularity. They have understood that enterprise companies might not want a closed package like Anthropic’s, but also don’t want to shoulder the total infrastructure burden required by OpenAI.

Google, with its Gemini Enterprise Agent Platform, has chosen to bill based on consumption for individual components, fragmenting the cost of orchestration based on what is actually used. Microsoft, with the Foundry Agent Service, applies pricing linked to the specific use of computationally intensive tools, such as the Code Interpreter. AWS follows this trend by preparing a Stateful Runtime Environment to manage long-term memory in collaboration with OpenAI and Bedrock AgentCore.

These giants confirm an unwritten rule of the new market: whoever controls the harness controls profit margins and the lock-in of the entire AI ecosystem.

The framework crisis and the internal team dilemma

Pressure on agnostic solutions

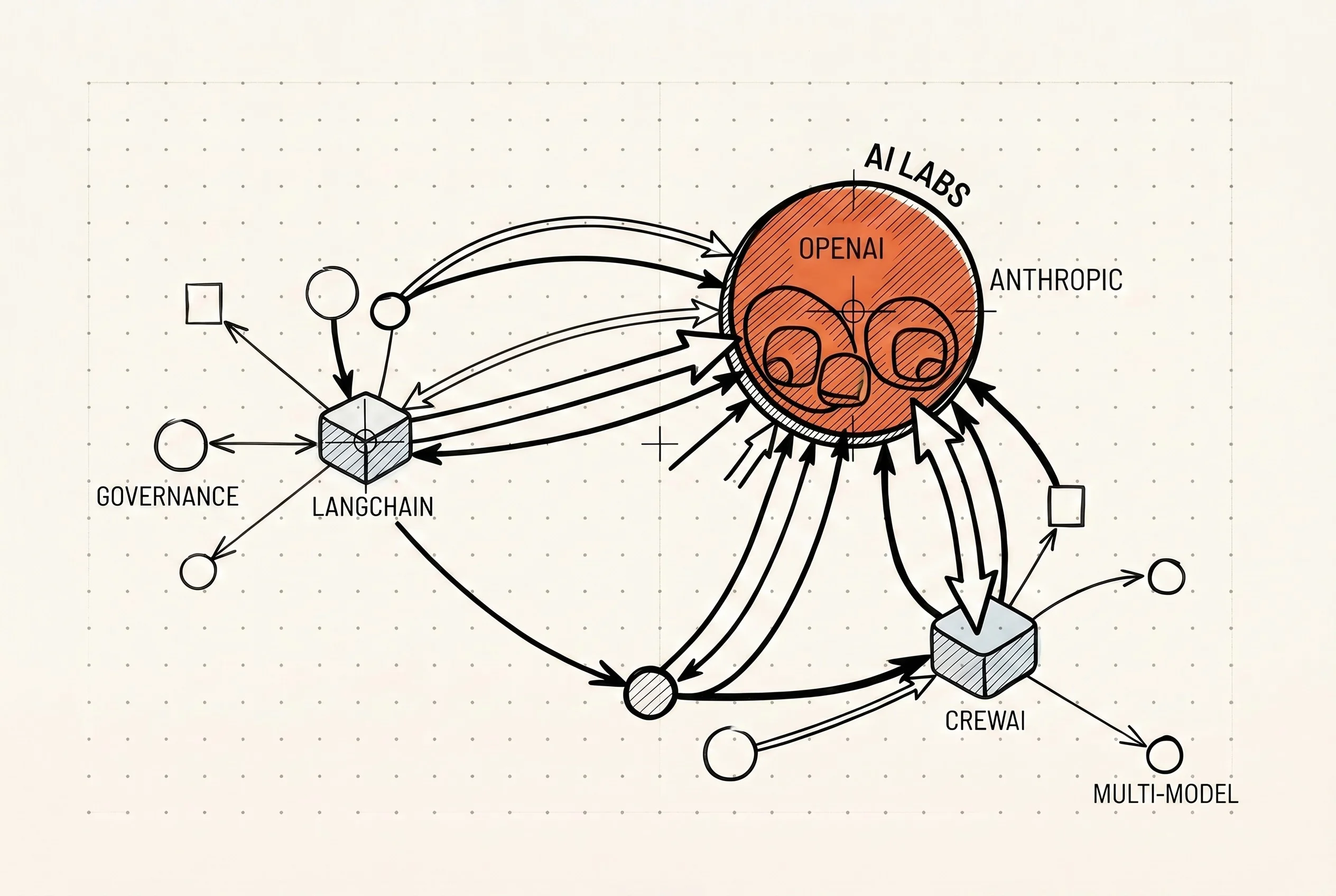

While artificial intelligence titans position their infrastructure pawns, the most violent impact is hitting the startup ecosystem. For months, horizontal frameworks thrived by filling exactly the void that major vendors had left open.

Take the case of orchestration frameworks designed to be agnostic to the underlying model. Tools like LangChain, CrewAI, and VoltAgent now find themselves in a position of extreme vulnerability. Curiously, VoltAgent is backed by heavy-hitting investors like Insight Partners, a fund that also invests in OpenAI and Anthropic.

Their main selling point has always been flexibility, promising to avoid ties to a single AI provider. But how do you sell an agnostic orchestration layer when the model creator gives you a native harness for free? Especially if this tool is open source and optimized for the vendor’s own APIs.

The pressure is unsustainable. The promise of avoiding vendor lock-in loses its appeal when the third-party framework introduces latency or abstraction bugs. Often, these tools cannot keep up with the new features released by vendors, inevitably pushing development teams to prefer the native tool.

Survival niches

However, if the competition for basic orchestration seems lost, there are strategic exceptions for those who change the battlefield. Not all harness startups are destined to succumb.

Sycamore, for example, recently raised $65 million in a seed round led by Coatue and Lightspeed. The reason for this success does not lie in an attempt to compete on basic orchestration. They focused on a problem that major vendors do not want to solve, creating an operating system for enterprise AI focused on governance and multi-model control.

Large companies need unassailable audit trails. They require architectural strategies to mitigate the risks of non-deterministic systems and rigorous compliance guarantees. Sycamore survives because it sells independence and security at the enterprise level, not just developer convenience.

This market dynamic leads us directly to the heart of the problem for structured companies. Faced with the crisis of horizontal frameworks, the classic objection from IT departments re-emerges with force. Many think that if external frameworks are at risk and managed services create lock-in, it is better to build the harness in-house. In the context of generative artificial intelligence, this is a death trap.

The real cost is not on the invoice: the lesson of cloud computing

The end of the flat-rate illusion

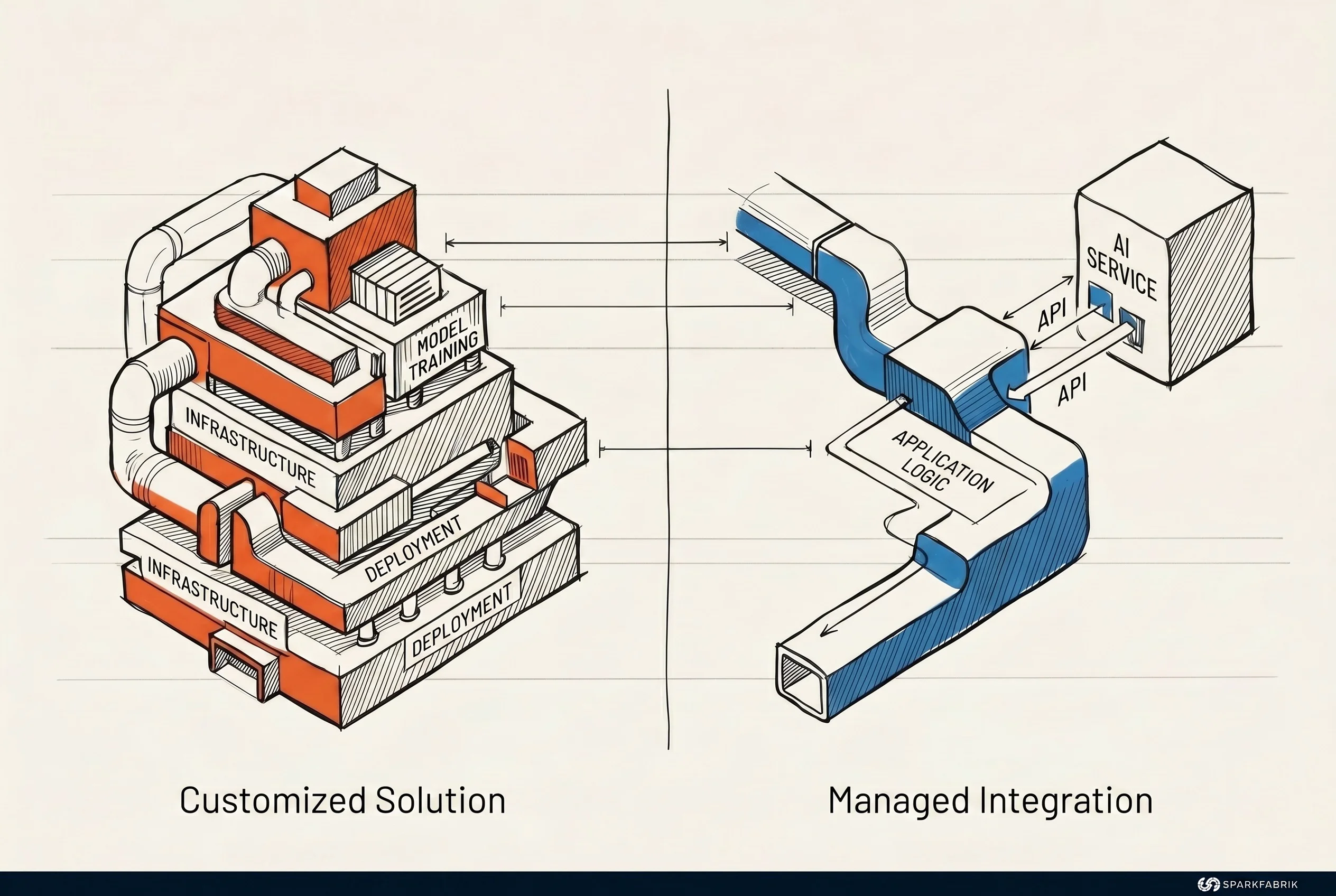

The calculation between building an internal solution and buying one from the market has been enriched by two new benchmarks. On one hand, the convenience benchmark: Anthropic offers you complete infrastructure for $0.08 an hour. On the other, the supervision benchmark: OpenAI gives you the base architecture and lets you choose where to run it.

If you think execution and orchestration costs are a secondary problem that can be managed internally, look at what is happening to market leaders. Microsoft recently announced that from June 2026, GitHub Copilot will abandon the flat subscription model and move to usage-based billing built on AI Credits.

The reason is purely mathematical. Inference and autonomous session orchestration costs have become unsustainable with a flat fee. If a giant like Microsoft has to pass execution costs to the end user through a consumption-based system, thinking you can economically manage custom AI infrastructure within a normal company is a financial gamble.

For teams still in the prototyping phase, building an orchestration infrastructure from scratch has become indefensible. Building memory, managing containers for code, and orchestrating tools used to be considered differentiating work. Today, it is a commodity accessible through a simple API call or by downloading a free SDK.


The impact is hardest on teams that already have systems in production. The typical objection is that the internal harness is perfectly optimized for the company’s specific workloads. The reality is much harsher:

An internal team of a few engineers cannot physically compete with the research budgets of four frontier vendors. Maintaining that custom system will become progressively slower and immensely more expensive.

Furthermore, it will become a nightmare for recruiting. What talented engineer will want to work on maintaining a legacy internal harness when the rest of the world uses market standards?

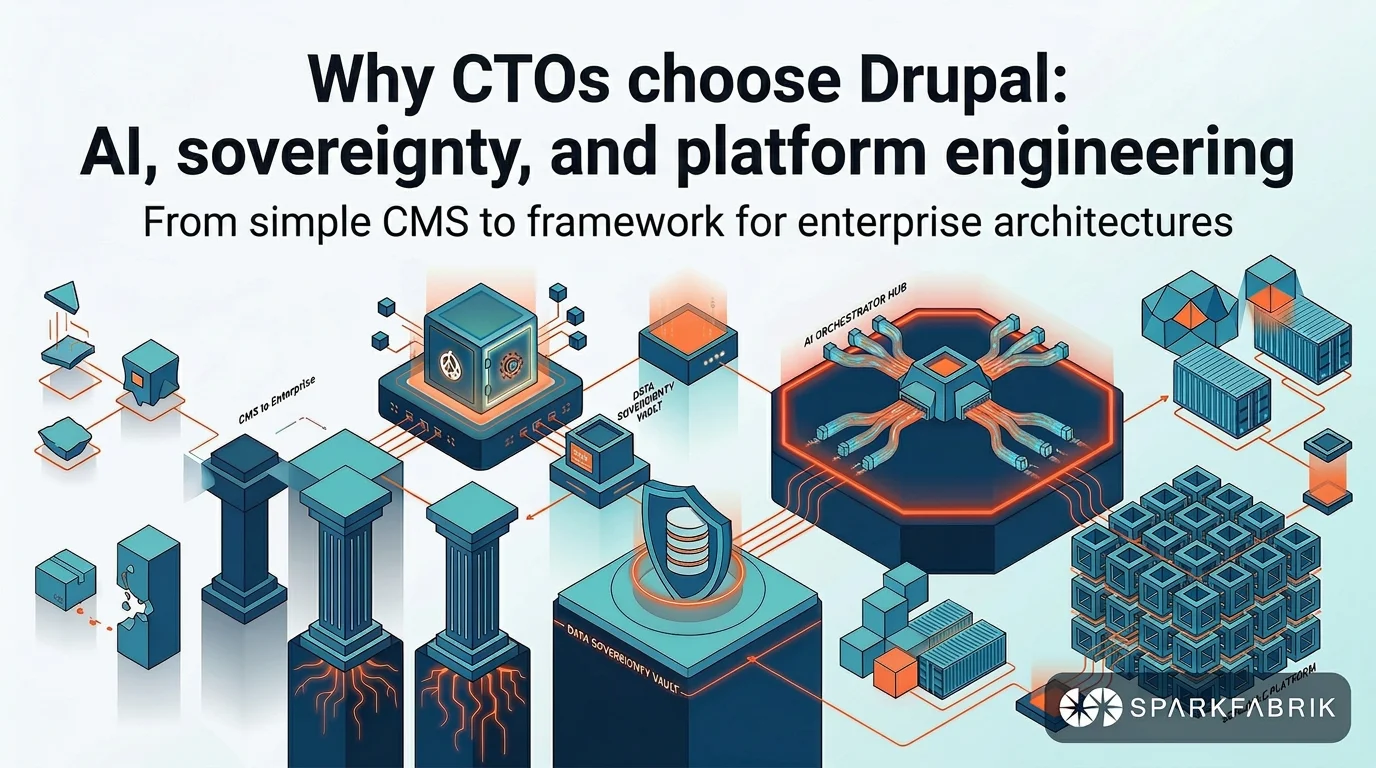

The historical parallel with cloud infrastructure

We have already seen this movie. The history of cloud infrastructure teaches us exactly how it will end.

When cloud computing became the standard, the market was not absorbed by a single monolithic solution. Terraform established itself as a dominant open-source, cross-cloud standard even while AWS heavily pushed its own managed service, CloudFormation. Similarly, we saw the potential of open source in orchestrating scalable infrastructure establish itself with Kubernetes. It became the de facto standard, forcing AWS, Google, and Microsoft to offer managed services based on it.

The AI harness market will divide along the same geological fault line. Open source will not kill managed services, and managed services will not eliminate open source. They will coexist because they cater to fundamentally different buyer profiles.

Companies that prioritize time-to-market will gravitate toward solutions like Anthropic Managed Agents. Organizations that need granular administration will adopt architectures based on the OpenAI SDK or specialized frameworks.

The real lesson is clear. The harness, which until yesterday was supposed to be the defensive moat for many companies, has effectively become basic infrastructure.

Conclusion

Janakiram MSV’s analysis highlights an uncomfortable truth for the software industry. AI agent orchestration infrastructure is no longer the element that differentiates your product in the market. Tech giants have decided that the “chassis” must be standardized, using price leverage to impose their architectures, from hourly subscriptions to open-source gifts.

Building this infrastructure from scratch within your company means accumulating technical debt that will soon become unsustainable. The real decision today is not determining which language model is marginally more intelligent in a synthetic benchmark. The critical decision is choosing which orchestration architecture you want to tie your software’s operational destiny to.

We are witnessing a paradigm shift toward an agentic-first approach. The value no longer lies in building the infrastructure pipes, but in how artificial intelligence orchestrates real business processes. Choosing the wrong harness today means spending the next five years doing plumbing maintenance, while your competitors invent the future.